NLP Roadmap 2023 with Free Resources.

This roadmap covers what you need to build a good foundation and ship real-world NLP projects.

🚀 I'm a Top Rated Plus NLP freelancer on Upwork with over $300K in earnings and a 100% Job Success rate. This journey began in 2022 after years of enriching experience in the field of Data Science.

📚 Starting my career in 2013 as a Software Developer focusing on backend and API development, I soon pursued my interest in Data Science by earning my M.Tech in IT from IIIT Bangalore, specializing in Data Science (2016 - 2018).

💼 Upon graduation, I carved out a path in the industry as a Data Scientist at MiQ (2018 - 2020) and later ascended to the role of Lead Data Scientist at Oracle (2020 - 2022).

🌐 Inspired by my freelancing success, I founded FutureSmart AI in September 2022. We provide custom AI solutions for clients using the latest models and techniques in NLP.

🎥 In addition, I run AI Demos, a platform aimed at educating people about the latest AI tools through engaging video demonstrations.

🧰 My technical toolbox encompasses:

🔧 Languages: Python, JavaScript, SQL.

🧪 ML Libraries: PyTorch, Transformers, LangChain.

🔍 Specialties: Semantic Search, Sentence Transformers, Vector Databases.

🖥️ Web Frameworks: FastAPI, Streamlit, Anvil.

☁️ Other: AWS, AWS RDS, MySQL.

🚀 In the fast-evolving landscape of AI, FutureSmart AI and I stand at the forefront, delivering cutting-edge, custom NLP solutions to clients across various industries.

Text Pre-Processing (Use #spacy):

Tokenization

Lemmatization

Removing Punctuation and Stopwords, etc.

Tokenization breaks a text into smaller pieces called tokens, such as words and punctuation marks. Lemmatization maps each word to its lemma, or dictionary form (for example, "sitting" becomes "sit"). Removing punctuation and stopwords drops punctuation marks and very common words such as "the" and "is", which carry little meaning for many tasks.
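To make the three preprocessing steps concrete, here is a minimal sketch in plain Python. It is not how spaCy works internally: the stopword set and the lemma table are tiny, hand-written assumptions used only for illustration. With a loaded spaCy pipeline you would read the same information from token attributes such as `token.lemma_`, `token.is_stop`, and `token.is_punct`.

```python
import re

# Toy stopword list and lemma table (assumptions for illustration only);
# spaCy ships far larger, language-specific resources.
STOPWORDS = {"the", "a", "an", "is", "are", "was", "were", "on", "in", "and", "of", "to"}
LEMMAS = {"cats": "cat", "sitting": "sit", "mats": "mat"}


def tokenize(text):
    """Split text into word tokens and single punctuation tokens."""
    return re.findall(r"\w+|[^\w\s]", text)


def preprocess(text):
    """Tokenize, lowercase, drop punctuation and stopwords, then lemmatize."""
    cleaned = []
    for token in tokenize(text):
        lowered = token.lower()
        if not lowered.isalnum():   # not a word token, e.g. "." or ","
            continue
        if lowered in STOPWORDS:    # very common, low-information word
            continue
        # Fall back to the lowercased token when the toy table has no entry.
        cleaned.append(LEMMAS.get(lowered, lowered))
    return cleaned


sentence = "The cats were sitting on the mats."
tokens = tokenize(sentence)
clean = preprocess(sentence)
print(tokens)
print(clean)
```

The order of the steps matters here: stopwords are checked before lemmatization, so "were" is dropped as a stopword rather than being mapped to a lemma first.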

Text Representation Techniques (Feature Engineering):

Bag of Words, Count Vector - #Sklearn

TFIDF - #Sklearn

Word2Vec - #Gensim

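Bag of Words and Count Vector are text representation techniques that turn a document into a vector of word counts, ignoring word order. TFIDF weights each word by how often it appears in a document, discounted by how many documents in the corpus contain it, so that words frequent in one document but rare elsewhere score highest. Word2Vec learns dense vector representations of words from the contexts in which they appear, so words used in similar contexts get similar vectors.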
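The count and TFIDF representations described above can be sketched in a few lines of plain Python. This is a teaching sketch under simple assumptions (whitespace tokenization, unsmoothed IDF, a three-document toy corpus), not a reproduction of scikit-learn: its `TfidfVectorizer` applies IDF smoothing and L2 normalization by default, so its numbers will differ.

```python
import math
from collections import Counter

# Toy corpus (an assumption for illustration).
docs = [
    "the cat sat on the mat",
    "the dog sat on the log",
    "cats and dogs",
]
tokenized = [doc.split() for doc in docs]
vocab = sorted({word for doc in tokenized for word in doc})


def count_vector(tokens):
    """Bag of Words: one raw count per vocabulary word, word order discarded."""
    counts = Counter(tokens)
    return [counts[word] for word in vocab]


def idf(word):
    """Plain inverse document frequency: log(N / number of docs containing word)."""
    containing = sum(1 for doc in tokenized if word in doc)
    return math.log(len(tokenized) / containing)


def tfidf_vector(tokens):
    """Term frequency (count / document length) times IDF, per vocabulary word."""
    counts = Counter(tokens)
    return [counts[word] / len(tokens) * idf(word) for word in vocab]


bow = [count_vector(doc) for doc in tokenized]
tfidf = [tfidf_vector(doc) for doc in tokenized]

print(vocab)
print(bow[0])
print([round(value, 3) for value in tfidf[0]])
```

In the first document, "the" has the highest raw count, yet "cat" gets the higher TFIDF weight because "the" also appears in the second document while "cat" appears only in the first.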

📌 Task:

Build a text classification model using algorithms like Logistic Regression, Random Forest, and XGBoost, with features from Count Vector, TFIDF, and Word2Vec.

Learn Neural Networks and Deep Learning (Irrespective of whether you want to learn NLP or Computer Vision)

Get hands-on with PyTorch or TensorFlow.

Neural networks and deep learning are two important concepts in machine learning. Neural networks are models built from layers of connected units whose weights are learned from data, which lets them model complex patterns. Deep learning refers to training neural networks with many layers.
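Before reaching for a framework, it can help to see the smallest possible case: a single sigmoid unit trained by gradient descent. The sketch below is hand-written for illustration and learns the logical AND function; the data, learning rate, and epoch count are assumptions chosen to keep it short. PyTorch and TensorFlow compute the gradients automatically and stack many such units into layers.

```python
import math

# Training data for logical AND: two inputs, one binary label.
samples = [([0.0, 0.0], 0), ([0.0, 1.0], 0), ([1.0, 0.0], 0), ([1.0, 1.0], 1)]

weights = [0.0, 0.0]
bias = 0.0
learning_rate = 1.0


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(inputs):
    """Forward pass: weighted sum of the inputs plus bias, then sigmoid."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(z)


for epoch in range(2000):
    for inputs, label in samples:
        # For a sigmoid unit with log loss, the gradient with respect to the
        # pre-activation z is simply (prediction - label).
        error = predict(inputs) - label
        for i, x in enumerate(inputs):
            weights[i] -= learning_rate * error * x
        bias -= learning_rate * error

predictions = [round(predict(inputs)) for inputs, _ in samples]
print(predictions)
```

The loop updates the weights after every sample (stochastic gradient descent), which is the same update pattern a framework optimizer applies to much larger networks.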

Information Extraction (Use #spacy):

POS tagging assigns a part-of-speech tag to each word in a sentence.

Dependency parsing finds the grammatical relations between words in a sentence.

Named Entity Recognition identifies and classifies named entities in a text.

📌 Task:

Learn how to use a pre-trained model from #Spacy for #NER, then learn how to build a custom NER model.

Transfer Learning and Transformers Overview:

Transfer learning reuses a model that was pre-trained on a large, general dataset as the starting point for a new, related task. This is commonly done by fine-tuning the weights of the pre-trained model on the new data.

Transformers are a neural network architecture built around the attention mechanism. They are pre-trained on large datasets, which makes them well suited to transfer learning: the features they have learned carry over to new data.

Learn how to fine-tune transformer models like BERT on a custom dataset.

Learn how to push a fine-tuned model to the Hugging Face Model Hub and load it into your deployment environment.

Deploy Machine Learning Model:

Integrate your NLP model into a Streamlit app and deploy it on Streamlit Cloud (or Heroku).

Expose the model as a REST API.

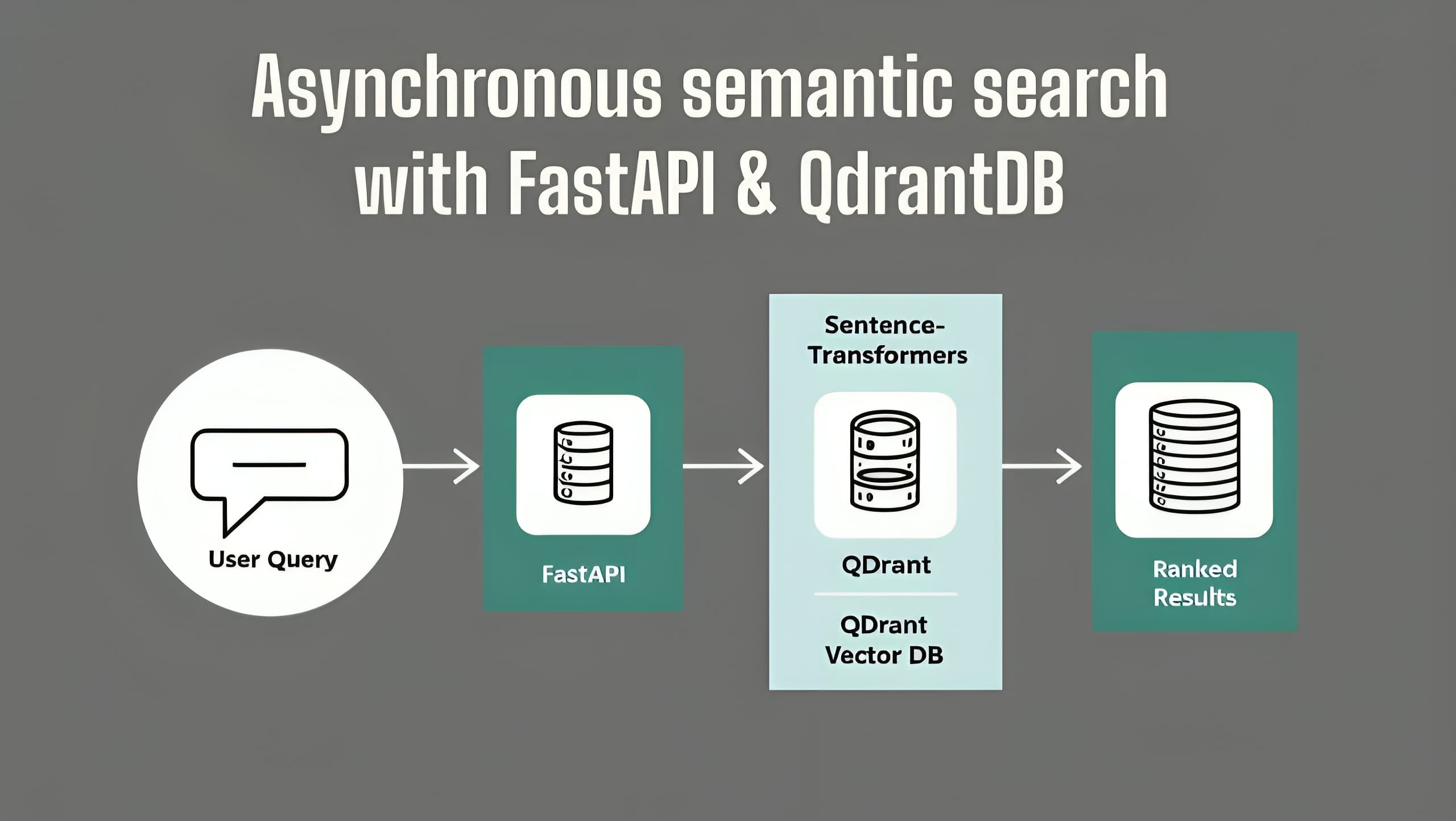

Use FastAPI or Flask and deploy it on AWS Cloud.

Sentence Transformers

Generate Sentence Embedding using Sentence Transformers: Sentence Transformers is a library that allows for the generation of sentence embeddings. These embeddings can then be used for tasks such as clustering documents or performing a semantic search.

Use Sentence embedding for clustering documents: Sentence embeddings can be used to cluster documents to group them by similarity. This can be useful when trying to organize a large collection of documents.

Use Sentence embedding for semantic search: Sentence embeddings can also be used to build semantic search. By representing both the query and the documents as vectors, you can rank documents by vector similarity (for example, cosine similarity) and find relevant results even when they share no exact keywords with the query.

Build a classification model by using Sentence Transformers embeddings as features for algorithms like Random Forest and XGBoost.
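The semantic search idea above comes down to ranking documents by the similarity between their embedding and the query embedding. Here is a minimal sketch using cosine similarity. The 3-dimensional vectors are made up for illustration and stand in for real embeddings, which you would obtain from the Sentence Transformers library via `SentenceTransformer(...).encode(...)`; the document titles and the `search` helper are hypothetical as well.

```python
import math

# Hypothetical 3-dimensional "embeddings" for illustration only; a real
# sentence embedding model produces vectors with hundreds of dimensions.
documents = {
    "How to reset your password": [0.9, 0.1, 0.0],
    "Best pizza places in town": [0.0, 0.2, 0.9],
    "Recovering a locked account": [0.8, 0.3, 0.1],
}
# Stand-in for the embedding of a query about account login problems.
query_embedding = [0.9, 0.0, 0.1]


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: dot product over norms."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    return dot / (norm_a * norm_b)


def search(query_vec, docs, top_k=2):
    """Return the top_k (score, text) pairs, most similar first."""
    scored = [(cosine_similarity(query_vec, vec), text) for text, vec in docs.items()]
    scored.sort(reverse=True)
    return scored[:top_k]


results = search(query_embedding, documents)
for score, text in results:
    print(f"{score:.3f}  {text}")
```

A linear scan like this is fine for small collections. For large ones, the same similarity ranking is usually delegated to a vector database or an approximate nearest neighbor index.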

Build NLP products using Language models like GPT-3

Learn how to use the #gpt3 playground to check feasibility (GPT-3 prompt design).

Integrate GPT-3 Prompt into code.

Once you have integrated your prompt, you can iterate on its wording and examples to get the desired results.

Fine-tune GPT-3
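For the prompt-integration step, one common pattern is to move the prompt you tested in the playground into a small function that fills in the new input. The sketch below shows only that part. The task (review sentiment), the few-shot examples, and the `build_prompt` name are hypothetical, and the actual API call is deliberately left out because it depends on the client library and model you use.

```python
# Hypothetical few-shot examples; replace with examples from your own task.
FEW_SHOT_EXAMPLES = [
    ("The delivery was quick and the food was hot.", "Positive"),
    ("My order arrived two hours late.", "Negative"),
]


def build_prompt(review):
    """Assemble the instruction, few-shot examples, and new input into one prompt."""
    lines = ["Classify the sentiment of each review as Positive or Negative.", ""]
    for text, label in FEW_SHOT_EXAMPLES:
        lines.append(f"Review: {text}")
        lines.append(f"Sentiment: {label}")
        lines.append("")
    # End with an open "Sentiment:" so the model completes the label.
    lines.append(f"Review: {review}")
    lines.append("Sentiment:")
    return "\n".join(lines)


prompt = build_prompt("Great service, will order again.")
print(prompt)
```

Keeping the prompt in one function makes it easy to change the instruction or the examples in a single place while you compare outputs.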

🎯 Solve popular NLP Tasks:

Text Classification.

Sentiment Analysis (Aspect Based Sentiment Analysis).

Document Clustering.

Topic Modeling.

Named Entity Recognition.

👏 Additionally:

Semantic Search.

Question Answering.

Conversational AI (Chatbot).

If you want to stay up-to-date on the latest AI tools and technologies, it's essential to visit AIDemos.com. Our website is a valuable resource for anyone interested in discovering the potential of AI. With video demos, you can explore the latest AI tools and gain a better understanding of what is possible with AI. Our goal is to educate and inform about the many possibilities of AI.

Don't miss out; visit AIDemos.com today!

Pradip Nichite:

I am a Freelance Data Scientist working on Natural Language Processing (NLP) and building end-to-end NLP applications.

I share practical, hands-on tutorials on NLP and bite-sized information and knowledge related to Artificial Intelligence.

LinkedIn: https://www.linkedin.com/in/pradipnichite/