Building a Custom NER Model with SpaCy: A Step-by-Step Guide

In today's data-driven world, extracting and understanding named entities from text is crucial for various natural language processing tasks. Named Entity Recognition (NER) is a subtask of information extraction that aims to identify and classify named entities such as names, organizations, locations, dates, and more. While SpaCy provides a powerful pre-trained NER model, there are situations where building a custom NER model becomes necessary. This blog post will guide you through the process of building a custom NER model using SpaCy, covering data preprocessing, training configuration, and model evaluation.

I. Understanding NER and the Need for Custom NER:

Named Entity Recognition (NER) is a subtask of natural language processing that focuses on identifying and classifying named entities within text. Named entities are specific categories of real-world items such as person names, organization names, locations, dates, numerical values, and more. NER is vital in various applications, including information extraction, question answering, chatbots, sentiment analysis, and recommendation systems.

SpaCy, a popular Python library for NLP, provides pre-trained NER models that perform well on general domains. These models are trained on large corpora and can recognize common entity types. However, there are scenarios where building a custom NER model becomes necessary. Here are some reasons why you might need to build a custom NER model:

Domain-specific entity recognition: General-purpose NER models may struggle to identify entities in domain-specific texts accurately. For instance, if you're working in the medical domain, you may need to recognize medical terms, drug names, and specific medical conditions. Building a custom NER model allows you to train it on domain-specific data, improving the recognition of entities relevant to your field.

Custom entity types: Pre-trained NER models are typically trained to recognize common entity types such as person, organization, and location. However, your application may require identifying unique entity types specific to your domain. By creating a custom NER model, you can define and train it to recognize these specific entity types effectively.

Adaptation to new entity types: Language is dynamic, and new entity types emerge over time. Pre-trained NER models might not be aware of these newly emerged entity types. Building a custom NER model allows you to incorporate these new entity types by providing labeled data and training the model accordingly.

Limited labeled data: In some cases, you might have only a small amount of labeled data. A pre-trained model used as-is may not generalize well to such a dataset. By building a custom NER model, you can fine-tune it on the labeled data you do have, resulting in better performance on your specific dataset.

In summary, while pre-trained NER models offer a great starting point, building a custom NER model becomes necessary to address domain-specific challenges, recognize custom entity types, adapt to new entity types, and make the most of limited labeled data. By customizing the NER model using SpaCy, you can enhance its performance and achieve more accurate and context-specific named entity recognition.

II. Building and Training a Custom NER Model with SpaCy:

A. Introduction to SpaCy's Named Entity Recognition (NER)

Importing the required libraries and downloading SpaCy models:

import spacy !python -m spacy download en_core_web_lg nlp = spacy.load("en_core_web_lg")Here, we import the

spacylibrary. Then, we download theen_core_web_lgmodel using the command!python -m spacy download en_core_web_lg. After downloading, we load the model usingspacy.load("en_core_web_lg")and assign it to the variablenlp.Creating a SpaCy

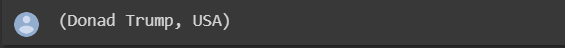

Docobject:doc = nlp("Donald Trump was President of USA")We create a

Docobject by passing a text string to the loaded SpaCy model (nlp). This text is processed by the NLP pipeline, and the resulting document is assigned to the variabledoc.Accessing the entities in the document:

    doc.ents

The `ents` attribute of the `Doc` object contains the named entities recognized in the document.

Output:

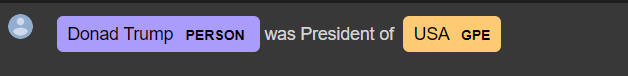
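Each item in `doc.ents` is a `Span` that carries its text, label, and character offsets. The following is a minimal sketch of reading those attributes. To keep it runnable without downloading a model, it assumes a blank English pipeline and assigns two entities by hand; the names `nlp_blank` and `demo_doc` and the labels `PERSON` and `GPE` are illustrative choices here, not the recorded output of `en_core_web_lg`.

```python
import spacy
from spacy.tokens import Span

# Assumption: a blank pipeline (tokenizer only), so no model download is needed.
nlp_blank = spacy.blank("en")
demo_doc = nlp_blank("Donald Trump was President of USA")

# Hand-assigned entities for illustration; a trained model sets doc.ents itself.
demo_doc.ents = [
    Span(demo_doc, 0, 2, label="PERSON"),  # tokens 0-1: "Donald Trump"
    Span(demo_doc, 5, 6, label="GPE"),     # token 5: "USA"
]

# Collect (text, label, start character, end character) for every entity.
entity_rows = [
    (ent.text, ent.label_, ent.start_char, ent.end_char) for ent in demo_doc.ents
]
for row in entity_rows:
    print(row)
```

The same list comprehension works unchanged on a `Doc` produced by a loaded model, which is a convenient way to compare predicted offsets against annotated ones.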

Visualizing the entities using SpaCy's `displacy`:

    from spacy import displacy

    displacy.render(doc, style="ent", jupyter=True)

This code imports the `displacy` module from SpaCy and renders the named entities in the document using the "ent" (entity) style.

B. Enhancing NER through a Kaggle Dataset for Fine-tuning:

Loading training data from a JSON file:

Data link: https://www.kaggle.com/datasets/finalepoch/medical-ner

    import json

    with open('/content/Corona2.json', 'r') as f:
        data = json.load(f)

Here, we import the `json` library and load training data from a JSON file named "Corona2.json" using the `json.load()` function. The loaded data is stored in the `data` variable.

Preparing training data in SpaCy format:

    training_data = []
    for example in data['examples']:
        temp_dict = {}
        temp_dict['text'] = example['content']
        temp_dict['entities'] = []
        # Each annotation becomes a (start, end, LABEL) tuple of character offsets
        for annotation in example['annotations']:
            start = annotation['start']
            end = annotation['end']
            label = annotation['tag_name'].upper()
            temp_dict['entities'].append((start, end, label))
        training_data.append(temp_dict)

This code prepares the training data in the required format for SpaCy. It iterates over the examples in the loaded data, extracts the text and annotations (start position, end position, and label) for each example, and appends them to a list called `training_data`.

Output:

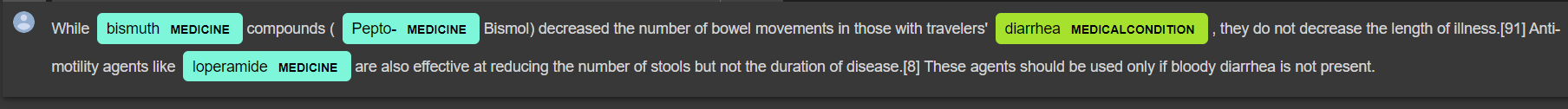

    {'text': "While bismuth compounds (Pepto-Bismol) decreased the number of bowel movements in those with travelers' diarrhea, they do not decrease the length of illness.[91] Anti-motility agents like loperamide are also effective at reducing the number of stools but not the duration of disease.[8] These agents should be used only if bloody diarrhea is not present.[92]\n\nDiosmectite, a natural aluminomagnesium silicate clay, is effective in alleviating symptoms of acute diarrhea in children,[93] and also has some effects in chronic functional diarrhea, radiation-induced diarrhea, and chemotherapy-induced diarrhea.[45] Another absorbent agent used for the treatment of mild diarrhea is kaopectate.\n\nRacecadotril an antisecretory medication may be used to treat diarrhea in children and adults.[86] It has better tolerability than loperamide, as it causes less constipation and flatulence.[94]", 'entities': [(360, 371, 'MEDICINE'), (383, 408, 'MEDICINE'), (104, 112, 'MEDICALCONDITION'), (679, 689, 'MEDICINE'), (6, 23, 'MEDICINE'), (25, 37, 'MEDICINE'), (461, 470, 'MEDICALCONDITION'), (577, 589, 'MEDICINE'), (853, 865, 'MEDICALCONDITION'), (188, 198, 'MEDICINE'), (754, 762, 'MEDICALCONDITION'), (870, 880, 'MEDICALCONDITION'), (823, 833, 'MEDICINE'), (852, 853, 'MEDICALCONDITION'), (461, 469, 'MEDICALCONDITION'), (535, 543, 'MEDICALCONDITION'), (692, 704, 'MEDICINE'), (563, 571, 'MEDICALCONDITION')]}

Converting training data to SpaCy `DocBin` format:

    import spacy
    from spacy.tokens import DocBin
    from spacy.util import filter_spans
    from tqdm import tqdm

    nlp = spacy.blank("en")  # blank English pipeline, used here only for tokenization
    doc_bin = DocBin()

    for training_example in tqdm(training_data):
        text = training_example['text']
        labels = training_example['entities']
        doc = nlp.make_doc(text)
        ents = []
        for start, end, label in labels:
            # Returns None when the offsets cannot be aligned to token boundaries
            span = doc.char_span(start, end, label=label, alignment_mode="contract")
            if span is None:
                print("Skipping entity")
            else:
                ents.append(span)
        # doc.ents cannot hold overlapping spans, so filter them first
        filtered_ents = filter_spans(ents)
        doc.ents = filtered_ents
        doc_bin.add(doc)

    doc_bin.to_disk("train.spacy")

In this section, we convert the training data to SpaCy's `DocBin` format, which is an efficient binary format for storing `Doc` objects. We initialize a blank English language model using `spacy.blank("en")`, create an empty `DocBin`, and iterate over each training example. For each example, we create a `Doc` object using `nlp.make_doc(text)` and create spans for the entities. We filter the spans to remove overlapping entities using `filter_spans`, update the `ents` attribute of the `Doc` object, and add the `Doc` to the `DocBin`. Finally, we save the `DocBin` to a file named "train.spacy".

Initializing the training configuration:

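    !python -m spacy init fill-config base_config.cfg config.cfg

This command takes an existing partial configuration file named "base_config.cfg" (for example, one exported from the quickstart widget in the SpaCy training documentation with the `ner` component selected) and fills in all remaining settings with their defaults. The filled configuration is saved as "config.cfg", which can be further customized for training the NER model. If you do not have a base config yet, `python -m spacy init config config.cfg --lang en --pipeline ner` writes a complete "config.cfg" directly.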
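Before moving on to training, it can help to see which annotations the `filter_spans` step in the conversion discards, since the sample output contains overlapping pairs such as (461, 470) and (461, 469). The sketch below is a plain-Python approximation of the documented rule (when spans overlap, the longest one wins, with the earlier start breaking ties). It works on character offsets rather than on token spans as SpaCy does, and the function name `filter_overlapping` is hypothetical, so treat it as an illustration rather than a drop-in replacement.

```python
def filter_overlapping(entities):
    """Keep the longest span among overlapping (start, end, label) triples."""
    # Longest spans first; ties are broken by the earlier start offset.
    ordered = sorted(entities, key=lambda ent: (-(ent[1] - ent[0]), ent[0]))
    kept = []
    for start, end, label in ordered:
        # End offsets are exclusive, so spans that merely touch do not overlap.
        if all(end <= k_start or start >= k_end for k_start, k_end, _ in kept):
            kept.append((start, end, label))
    return sorted(kept)


# A few triples taken from the sample output shown earlier.
sample_entities = [
    (461, 470, "MEDICALCONDITION"),
    (461, 469, "MEDICALCONDITION"),
    (852, 853, "MEDICALCONDITION"),
    (853, 865, "MEDICALCONDITION"),
    (6, 23, "MEDICINE"),
]
kept_entities = filter_overlapping(sample_entities)
dropped_entities = [ent for ent in sample_entities if ent not in kept_entities]
print("kept:", kept_entities)
print("dropped:", dropped_entities)
```

Running a check like this over the whole dataset gives a quick count of how many annotations are lost to overlaps, which is worth knowing before interpreting the trained model's scores.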

Training the NER model:

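    !python -m spacy train config.cfg --output ./ --paths.train ./train.spacy --paths.dev ./train.spacy

This command trains the NER model using the specified configuration file ("config.cfg") and the training data from the "train.spacy" file. The trained pipelines are saved in the current directory as "model-best" and "model-last". Note that the same file is passed here as both training and development data, so the scores reported during training are measured on examples the model has already seen and will be optimistic. Passing a separate held-out file to `--paths.dev` gives a more honest estimate.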
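Because the command above passes the same file to `--paths.train` and `--paths.dev`, one simple improvement is to split the prepared examples before converting them. The following is a minimal sketch using only the standard library; the function name `split_train_dev`, the 20% dev fraction, and the fixed seed are assumptions chosen for illustration, not values from the original workflow.

```python
import random


def split_train_dev(examples, dev_fraction=0.2, seed=0):
    """Shuffle a copy of the examples and split it into (train, dev) lists."""
    shuffled = list(examples)
    # A seeded generator keeps the split reproducible between runs.
    random.Random(seed).shuffle(shuffled)
    # Always hold out at least one example for the dev set.
    dev_size = max(1, int(len(shuffled) * dev_fraction))
    return shuffled[dev_size:], shuffled[:dev_size]


# Toy stand-in for the training_data list built earlier.
toy_examples = [{"text": f"example {i}", "entities": []} for i in range(10)]
train_examples, dev_examples = split_train_dev(toy_examples)
print(len(train_examples), len(dev_examples))
```

Each list can then go through the same `DocBin` conversion loop and be saved to its own file (for instance "train.spacy" and a hypothetical "dev.spacy"), with the second file passed to `--paths.dev`.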

Loading the trained NER model and visualizing entities:

    import spacy
    from spacy import displacy

    nlp_ner = spacy.load("model-best")

    doc = nlp_ner("While bismuth compounds (Pepto-Bismol) decreased the number of bowel movements in those with travelers' diarrhea, they do not decrease the length of illness.[91] Anti-motility agents like loperamide are also effective at reducing the number of stools but not the duration of disease.[8] These agents should be used only if bloody diarrhea is not present.")

    # One display color per entity label
    colors = {"PATHOGEN": "#F67DE3", "MEDICINE": "#7DF6D9", "MEDICALCONDITION": "#a6e22d"}
    options = {"colors": colors}

    displacy.render(doc, style="ent", options=options, jupyter=True)

In this part, we load the trained NER model using `spacy.load("model-best")`. Then, we process a sample text using the loaded model and store the result in the `doc` variable. Finally, we define colors for the entity types, pass them as options, and use `displacy.render` to visualize the entities with their corresponding colors in the Jupyter Notebook.

Note: Make sure to install the necessary dependencies (`spacy` and `tqdm`) if they are not already installed in your environment. The `json` module is part of the Python standard library and needs no installation.
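Visual inspection is useful, but a numeric score makes it easier to compare training runs. SpaCy ships its own `python -m spacy evaluate` command for this; the sketch below only illustrates the underlying idea in plain Python, counting an entity as correct when its start, end, and label all match the annotation exactly. The function name `prf_scores` and the toy spans are hypothetical.

```python
def prf_scores(gold_examples, predicted_examples):
    """Entity-level precision, recall, and F1 using exact (start, end, label) matches."""
    true_pos = false_pos = false_neg = 0
    for gold, predicted in zip(gold_examples, predicted_examples):
        gold_set, pred_set = set(gold), set(predicted)
        true_pos += len(gold_set & pred_set)   # predicted and annotated
        false_pos += len(pred_set - gold_set)  # predicted but not annotated
        false_neg += len(gold_set - pred_set)  # annotated but missed
    precision = true_pos / (true_pos + false_pos) if true_pos + false_pos else 0.0
    recall = true_pos / (true_pos + false_neg) if true_pos + false_neg else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Toy data: one list of spans per text. The second prediction in the
# first text has a wrong end offset, so it counts as both a miss and a false alarm.
gold_spans = [
    [(0, 10, "MEDICINE"), (19, 27, "MEDICALCONDITION")],
    [(5, 12, "MEDICINE")],
]
predicted_spans = [
    [(0, 10, "MEDICINE"), (19, 26, "MEDICALCONDITION")],
    [(5, 12, "MEDICINE")],
]
precision, recall, f1 = prf_scores(gold_spans, predicted_spans)
print(f"precision={precision:.3f} recall={recall:.3f} f1={f1:.3f}")
```

With a trained pipeline, the predicted spans for a text can be collected as `[(ent.start_char, ent.end_char, ent.label_) for ent in nlp_ner(text).ents]`, ideally on held-out examples that were not used for training.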

III. Conclusion and Next Steps: Empowering NLP Applications with Custom NER Models

In conclusion, this blog has provided a step-by-step guide on building a custom NER model using SpaCy. We have covered the importance of NER and the need for custom models in specific domains or with unique entity types. Additionally, we explored the process of preprocessing NER training data in SpaCy format and creating a training configuration to train and test the new model.

By following these steps, you can leverage the power of SpaCy to create a custom NER model that accurately identifies named entities relevant to your specific application or domain. Building a custom NER model allows you to enhance the performance and adaptability of your NLP applications, leading to more accurate and meaningful results.

To further enhance your custom NER model, you can consider the following next steps:

Fine-tuning and Optimization: Experiment with different hyperparameters, model architectures, and training strategies to improve the performance of your custom NER model. Fine-tuning the model on more labeled data or using transfer learning techniques can also be beneficial.

Data Augmentation: If you have limited labeled data, consider applying data augmentation techniques to generate synthetic training examples. This can help diversify and expand your training data, leading to better generalization.

Error Analysis and Iterative Refinement: Perform a thorough error analysis on your model's performance. Identify common patterns or challenging cases where the model struggles. Based on the analysis, iteratively refine your training data, model architecture, or training process to address these challenges.

Deployment and Integration: Once you have a trained and validated custom NER model, explore options for deploying it in your desired application or system. Consider integration with other components, frameworks, or APIs to utilize the model's predictions in real-world scenarios.
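As one concrete form of the data augmentation idea above, an annotated mention can be swapped for another term of the same type, provided the character offsets of every later entity are shifted to match. The sketch below assumes the `(start, end, label)` tuple format used for the training data in this post; the function name `swap_entity` and the example sentence are hypothetical, and whether a given substitution yields a sensible sentence still needs human judgment.

```python
def swap_entity(text, entities, index, replacement):
    """Replace the mention of entities[index] and shift the offsets that follow it."""
    start, end, label = entities[index]
    delta = len(replacement) - (end - start)
    new_text = text[:start] + replacement + text[end:]
    new_entities = []
    for i, (ent_start, ent_end, ent_label) in enumerate(entities):
        if i == index:
            # The swapped mention keeps its start and label but gets a new end.
            new_entities.append((start, start + len(replacement), label))
        elif ent_start >= end:
            # Entities after the swap move by the change in length.
            new_entities.append((ent_start + delta, ent_end + delta, ent_label))
        elif ent_end <= start:
            # Entities before the swap are unaffected.
            new_entities.append((ent_start, ent_end, ent_label))
        else:
            raise ValueError("cannot swap an entity that overlaps another one")
    return new_text, new_entities


original_text = "Loperamide reduces diarrhea."
original_entities = [(0, 10, "MEDICINE"), (19, 27, "MEDICALCONDITION")]
augmented_text, augmented_entities = swap_entity(
    original_text, original_entities, 0, "Racecadotril"
)
print(augmented_text)
print(augmented_entities)
```

Augmented examples should be added to the training split only, so that the dev set continues to reflect real text.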

Remember, building an effective custom NER model requires an iterative process of experimentation, evaluation, and refinement. Stay updated with the latest research and advancements in NLP to incorporate new techniques and approaches into your workflow.

By following these guidelines and continuously improving your custom NER model, you can unlock the potential of named entity recognition for a wide range of applications, from information extraction to intelligent chatbots and beyond. Happy coding and NLP exploration!
