Leveraging LinkedIn Data with GenAI: From Scraping to Personalized Outreach

Introduction:

LinkedIn holds a vast amount of professional data, but much of its value is buried in unstructured profile text. Building on 'A Comprehensive Guide to Scraping LinkedIn Data,' this article shows how Large Language Models such as GPT-4 can turn raw profile data scraped from LinkedIn into practical products. Two examples are email marketing aimed at potential users of your company's product and personalized messages for talent acquisition. All code in this article is written in Python.

1. Installing Dependencies:

%%capture
!pip install openai requests

2. Using ProxyCurl API:

We will use the ProxyCurl API to scrape the target user's profile data. The sections that follow reuse this data for different use cases.

import requests
import time

api_key = 'Your ProxyCurl API_KEY'  # Put your API Key here
headers = {'Authorization': 'Bearer ' + api_key}
api_endpoint = 'https://nubela.co/proxycurl/api/v2/linkedin'

# Query parameters: the profile to look up, plus skills and caching options
params = {
    'linkedin_profile_url': "https://www.linkedin.com/in/pradipnichite/",
    'skills': 'include',
    'use_cache': 'if-recent',
    'fallback_to_cache': 'never',
}

# Time the request to see how long a lookup takes
start_time = time.time()
response = requests.get(api_endpoint,
                        params=params,
                        headers=headers)
end_time = time.time()

time_taken = end_time - start_time
print("Time taken:", time_taken)

# Decode the JSON body into a Python dictionary
data3 = response.json()

Response:

{'public_identifier': 'pradipnichite',

'profile_pic_url': 'https://s3.us-west-000.backblazeb2.com/proxycurl/person/pradipnichite/profile?X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Credential=0004d7f56a0400b0000000001%2F20231230%2Fus-west-000%2Fs3%2Faws4_request&X-Amz-Date=20231230T045709Z&X-Amz-Expires=3600&X-Amz-SignedHeaders=host&X-Amz-Signature=f5d750fabd42a7722147553735236f0da5634b5d09459d61cd1d5304e841c72b',

'background_cover_image_url': 'https://s3.us-west-000.backblazeb2.com/proxycurl/person/pradipnichite/cover?X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Credential=0004d7f56a0400b0000000001%2F20231230%2Fus-west-000%2Fs3%2Faws4_request&X-Amz-Date=20231230T045709Z&X-Amz-Expires=3600&X-Amz-SignedHeaders=host&X-Amz-Signature=146e3be4527725176d897603eca7b424a026ffeb7a8215be5257808836f9d339',

'first_name': 'Pradip',

'last_name': 'Nichite',

'full_name': 'Pradip Nichite',

'follower_count': 20901,

'occupation': 'Founder & Lead Data Scientist at FutureSmart AI',

'headline': 'Top Rated Plus - NLP Freelancer | Custom NLP Solutions | GPT-4 | AI Demos',

'summary': "🚀 I'm a Top Rated Plus NLP freelancer on Upwork with over $100K in earnings and a 100% Job Success rate. This journey began in 2022 after years of enriching experience in the field of Data Science.\n\nhttps://www.upwork.com/freelancers/pradipnichite\n\n📚 Starting my career in 2013 as a Software Developer focusing on backend and API development, I soon pursued my interest in Data Science by earning my M.Tech in IT from IIIT Bangalore, specializing in Data Science (2016 - 2018).\n\n💼 Upon graduation, I carved out a path in the industry as a Data Scientist at MiQ (2018 - 2020) and later ascended to the role of Lead Data Scientist at Oracle (2020 - 2022).\n\n🌐 Inspired by my freelancing success, I founded FutureSmart AI in September 2022. We provide custom AI solutions for clients using the latest models and techniques in NLP.\n\n🎥 In addition, I run AI Demos, a platform aimed at educating people about the latest AI tools through engaging video demonstrations.\n\n🧰 My technical toolbox encompasses:\n🔧 Languages: Python, JavaScript, SQL.\n🧪 ML Libraries: PyTorch, Transformers, LangChain.\n🔍 Specialties: Semantic Search, Sentence Transformers, Vector Databases.\n🖥️ Web Frameworks: FastAPI, Streamlit, Anvil.\n☁️ Other: AWS, AWS RDS, MySQL.\n\n🚀 In the fast-evolving landscape of AI, FutureSmart AI and I stand at the forefront, delivering cutting-edge, custom NLP solutions to clients across various industries.\n\nUpwork Profile: https://www.upwork.com/freelancers/~014fdabc6436bf9bd4?viewMode=1",

'country': 'IN',

'country_full_name': 'India',

'city': 'Mumbai',

'state': 'Maharashtra',

'experiences': [{'starts_at': {'day': 1, 'month': 2, 'year': 2022},

...
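The request above can also be wrapped in a small helper so that HTTP failures surface immediately instead of producing a confusing dictionary later on. This is a minimal sketch that assumes the same endpoint and parameters as the snippet above. The function name `fetch_profile`, the injected `http_get` argument, and the 30-second timeout are illustrative choices and not part of the ProxyCurl API.

```python
API_ENDPOINT = "https://nubela.co/proxycurl/api/v2/linkedin"


def fetch_profile(profile_url, api_key, http_get, timeout=30):
    """Fetch one profile and return the decoded JSON, raising on HTTP errors.

    http_get is any callable with the signature of requests.get
    (hypothetical parameter, used here so the helper is easy to test).
    """
    headers = {"Authorization": "Bearer " + api_key}
    params = {
        "linkedin_profile_url": profile_url,
        "skills": "include",
        "use_cache": "if-recent",
        "fallback_to_cache": "never",
    }
    response = http_get(API_ENDPOINT, params=params, headers=headers, timeout=timeout)
    response.raise_for_status()  # surfaces 4xx/5xx responses as exceptions
    return response.json()


# Usage (requires the requests package and a valid key):
# import requests
# data3 = fetch_profile("https://www.linkedin.com/in/pradipnichite/", api_key, requests.get)
```

Passing the HTTP function in as an argument keeps the helper independent of any one client library and lets you substitute a stub while developing the rest of the pipeline.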

3. Formatting the Data:

The data needs to be formatted properly before being fed to large language models, such as GPT-3.5-turbo or GPT-4, to witness the magic unfold!

Here's an example of how you can do it!🚀

# Extract information from the data3 dictionary

public_identifier = data3.get('public_identifier', '')

profile_pic_url = data3.get('profile_pic_url', '')

background_cover_image_url = data3.get('background_cover_image_url', '')

first_name = data3.get('first_name', '')

last_name = data3.get('last_name', '')

full_name = data3.get('full_name', '')

follower_count = data3.get('follower_count', 0)

occupation = data3.get('occupation', '')

headline = data3.get('headline', '')

summary = data3.get('summary', '')

country = data3.get('country', '')

country_full_name = data3.get('country_full_name', '')

city = data3.get('city', '')

state = data3.get('state', '')

experience_data = data3.get('experiences', [])

education_data = data3.get('education', [])

skills = data3.get('skills') or []  # list of skill names; empty list if missing

linkedin_profile_url = data3.get('linkedin_profile_url', '')

linkedin_recommendations_received = data3.get('linkedin_recommendations_received', 0)

linkedin_established_at = data3.get('linkedin_established_at', {})

linkedin_joined_at = data3.get('linkedin_joined_at', '')

# Store information in a single string variable

linkedin_info = f"Public Identifier: {public_identifier}\n"

linkedin_info += f"Profile Picture URL: {profile_pic_url}\n"

linkedin_info += f"Background Cover Image URL: {background_cover_image_url}\n"

linkedin_info += f"First Name: {first_name}\n"

linkedin_info += f"Last Name: {last_name}\n"

linkedin_info += f"Full Name: {full_name}\n"

linkedin_info += f"Follower Count: {follower_count}\n"

linkedin_info += f"Occupation: {occupation}\n"

linkedin_info += f"Headline: {headline}\n"

linkedin_info += f"Summary: {summary}\n"

linkedin_info += f"Country: {country}\n"

linkedin_info += f"Country Full Name: {country_full_name}\n"

linkedin_info += f"City: {city}\n"

linkedin_info += f"State: {state}\n"

def format_date(date_dict):
    # Turn {'day': 1, 'month': 2, 'year': 2022} into "1 2 2022"
    return f"{date_dict['day']} {date_dict['month']} {date_dict['year']}"

# Add experiences information to the string

linkedin_info += "\n\nExperiences:\n"

for experience in experience_data:
    linkedin_info += f"Company: {experience['company']}\n"
    linkedin_info += f"Title: {experience['title']}\n"
    linkedin_info += f"Duration: {format_date(experience['starts_at'])} - "
    # An empty end date means the position is ongoing
    if experience['ends_at']:
        linkedin_info += f"{format_date(experience['ends_at'])}\n"
    else:
        linkedin_info += "Present\n"
    linkedin_info += f"Description: {experience['description']}\n"
    linkedin_info += f"LinkedIn Profile: {experience['company_linkedin_profile_url']}\n"
    linkedin_info += f"Logo URL: {experience['logo_url']}\n"
    linkedin_info += "\n"

# Add education information to the string

linkedin_info += "\nEducation:\n"

for education_entry in education_data:
    starts_at = education_entry['starts_at']
    ends_at = education_entry['ends_at']
    # Guard the whole end date, not only the year: ends_at may be empty
    end_text = f"{ends_at['month']}/{ends_at['year']}" if ends_at else "Present"
    linkedin_info += f"\n{education_entry['field_of_study']} ({starts_at['month']}/{starts_at['year']} - {end_text}):\n"
    linkedin_info += f"Degree: {education_entry['degree_name']}\n"
    linkedin_info += f"School: {education_entry['school']}\n"
    linkedin_info += f"Grade: {education_entry['grade']}\n"
    linkedin_info += f"Activities and Societies: {education_entry['activities_and_societies']}\n"

# Extracting accomplishment projects

projects = "\nProjects:\n"

for project in data3.get("accomplishment_projects", []):
    projects += f"{project['title']} ({project['starts_at']['month']}/{project['starts_at']['year']} - "
    if project['ends_at']:
        projects += f"{project['ends_at']['month']}/{project['ends_at']['year']})"
    else:
        projects += "Present)"
    projects += f"\nDescription: {project['description']}\n\n"

# Extracting accomplishment test scores

test_scores = "\nTest Scores:\n"

for test_score in data3.get("accomplishment_test_scores", []):
    test_scores += f"{test_score['name']}: {test_score['score']} ({test_score['date_on']['month']}/{test_score['date_on']['year']})\n"

# Extracting certifications

certifications = "\nCertifications:\n"

for certification in data3.get("certifications", []):
    certifications += f"{certification['name']} - {certification['authority']} ({certification.get('url', 'No URL available')})\n"

# Combine all information into a single string

linkedin_info += f"{data3['full_name']} - Accomplishments\n\n{projects}\n{test_scores}\n{certifications}"

# Add skills information to the string

linkedin_info += f"\n\nSkills: {', '.join(skills)}\n"

# Display the final string

print(linkedin_info)

Output:

Public Identifier: pradipnichite

Profile Picture URL: https://s3.us-west-000.backblazeb2.com/proxycurl/person/pradipnichite/profile?X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Credential=0004d7f56a0400b0000000001%2F20231226%2Fus-west-000%2Fs3%2Faws4_request&X-Amz-Date=20231226T140742Z&X-Amz-Expires=3600&X-Amz-SignedHeaders=host&X-Amz-Signature=31ed42fcde14ed8c34c58a44d7ce6140f710776d4c69f480427a6d01680912fa

Background Cover Image URL: https://s3.us-west-000.backblazeb2.com/proxycurl/person/pradipnichite/cover?X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Credential=0004d7f56a0400b0000000001%2F20231226%2Fus-west-000%2Fs3%2Faws4_request&X-Amz-Date=20231226T140742Z&X-Amz-Expires=3600&X-Amz-SignedHeaders=host&X-Amz-Signature=c3a4363d504c75034096d6ebb0dd876c59a0b41c88e4560bd383d66ab09695f3

First Name: Pradip

Last Name: Nichite

Full Name: Pradip Nichite

Follower Count: 20901

Occupation: Founder & Lead Data Scientist at FutureSmart AI

Headline: Top Rated Plus - NLP Freelancer | Custom NLP Solutions | GPT-4 | AI Demos

...
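The formatting code above indexes nested date fields directly, so a profile with a missing start or end date can stop the script with an error. The sketch below shows one defensive alternative, assuming that any date field may be empty or partially filled for some profiles. The helper names `safe_format_date` and `format_period`, and the "Unknown" and "Present" placeholders, are illustrative and not part of the original code.

```python
def safe_format_date(date_dict):
    """Format a {'day', 'month', 'year'} dict as 'D/M/YYYY'.

    An empty or missing dict is treated as an ongoing entry ("Present").
    Parts that are absent are simply skipped.
    """
    if not date_dict:
        return "Present"
    parts = [date_dict.get("day"), date_dict.get("month"), date_dict.get("year")]
    return "/".join(str(part) for part in parts if part is not None)


def format_period(entry):
    """Return 'start - end' for an experience, education, or project entry."""
    starts_at = entry.get("starts_at")
    start = safe_format_date(starts_at) if starts_at else "Unknown"
    end = safe_format_date(entry.get("ends_at"))
    return f"{start} - {end}"


example = {"starts_at": {"day": 1, "month": 2, "year": 2022}, "ends_at": None}
print(format_period(example))  # 1/2/2022 - Present
```

With a helper like this, each loop can build its duration line with a single call instead of repeating the if/else check for every section.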

4. Using OpenAI Models:

Before utilizing OpenAI models, it is imperative to obtain an OpenAI API key and define the system prompt that aligns with the specific use case. To illustrate this process, we'll examine two distinct use cases:

a. Creating Personalized Messages for Hiring Talent:

Picture yourself in the role of a Hiring Manager searching for suitable candidates to fulfill your company's needs, while also drafting personalized messages to inspire them to become part of your workforce.

Here's how you can do it!🤔

i. Defining the system prompt:

system_prompt = "You are Adam Stokes, an HR Manager who wants to hire for the role of a Data Scientist at XYZ.AI. Based on the biodata available below, write a personalized message to offer the role to the person"
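If the same kind of prompt is needed for several senders, roles, or companies, it can be generated from a small template rather than edited by hand each time. This is only a sketch: the function name `build_recruiter_prompt` and its parameters are hypothetical, and the wording simply mirrors the prompt above.

```python
def build_recruiter_prompt(sender_name, role, company):
    """Build a hiring system prompt for a given sender, role, and company."""
    return (
        f"You are {sender_name}, an HR Manager who wants to hire for the role of "
        f"{role} at {company}. Based on the biodata available below, write a "
        "personalized message to offer the role to the person."
    )


system_prompt = build_recruiter_prompt("Adam Stokes", "Data Scientist", "XYZ.AI")
print(system_prompt)
```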

ii. Calling an OpenAI Model

Subsequently, we'll employ the GPT-4 model to generate a personalized message tailored to the candidate targeted by the HR Manager.

from openai import OpenAI

client = OpenAI(api_key='Your OPENAI_API_KEY')  # Put your OpenAI API Key here

response = client.chat.completions.create(
    model="gpt-4-1106-preview",
    messages=[
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": f"Biodata: {linkedin_info}"}
    ]
)

# The generated message is the content of the first choice
print(response.choices[0].message.content)

Response:

Subject: Exciting Opportunity Awaits You at XYZ.AI – Offer for ‘Data Scientist’ Position

Dear Pradip Nichite,

I hope this message finds you thriving and excel in your innovative pursuits at FutureSmart AI.

I am Adam Stokes, HR Manager at XYZ.AI, a pioneering company in AI and Machine Learning solutions. Our mission resonates with your passion for delivering cutting-edge NLP solutions, a dedication that doesn’t go unnoticed in the strides you have attained in the industry.

As we follow the evolution of AI closely, it’s evident that your extensive experience, particularly your remarkable tenure as a founder and lead data scientist at FutureSmart AI, is exactly the calibre of expertise we are eager to onboard at our firm.

We are impressed by the impressive array of projects under your belt – from sentiment analysis to automating the detection of diabetic retinopathy. Your proficiency with Python, NLP techniques, ML libraries, and A.I. frameworks, merged with a proven history of significant freelance success and impactful roles at reputable companies like Oracle and MiQ, ensures you're the embodiment of the skill set we are seeking for our next Data Scientist.

At XYZ.AI, we believe that you would not only continue to flourish professionally but also lead our team through the forefront of artificial intelligence innovations. As part of our team, you’ll have access to state-of-the-art resources, a diverse problem-solving playground, and opportunities to further influence the industry through our various channels.

We would be thrilled to discuss how your visions and our goals at XYZ.AI can align for a transformative collaboration. We’re confident that together, we can shape the future of AI applications and spearhead the new wave of intelligent solutions for our global clients.

Kindly let us know a convenient time for you to have an in-depth conversation about what this role entails and how XYZ.AI envisions your involvement in advancing our frontier in A.I.

We eagerly await the prospect of you bringing your strategic insight, technical mastery, and inspiring leadership to our dynamic team.

Warm regards,

Adam Stokes

HR Manager, XYZ.AI

[Company Contact information]

[LinkedIn Profile URL]
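To send a generated message like the one above by email, the subject usually has to be separated from the body. The sketch below assumes the model begins its reply with a "Subject:" line, as it did in this sample. That is not guaranteed, so the helper falls back to an empty subject. The function name `split_subject_body` is illustrative.

```python
def split_subject_body(message):
    """Split generated text into (subject, body).

    If the first line does not start with "Subject:", the subject is
    returned as an empty string and the whole text is treated as the body.
    """
    text = message.strip()
    first_line, _, rest = text.partition("\n")
    if first_line.lower().startswith("subject:"):
        subject = first_line[len("subject:"):].strip()
        return subject, rest.strip()
    return "", text


sample = "Subject: Exciting Opportunity\n\nDear Pradip Nichite,\nI hope this message finds you well."
subject, body = split_subject_body(sample)
print(subject)  # Exciting Opportunity
```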

b. Personalized Email Marketing Campaigns:

Imagine yourself assuming the role of a passionate salesperson at your company, tasked with captivating the end user and driving product sales through email marketing.

By understanding the language and preferences of our target audience through NLP, we can create personalized marketing campaigns that resonate deeply. Imagine product ads tailored to each customer's needs and interests, leading to higher conversions and brand loyalty.

Here's how you can do it!🚀

i. Defining the system prompt:

system_prompt = "You are Ben Grantt, a salesperson in a company called GranttCourses that sells educational courses. Based on the available biodata, craft a message to pitch a course to the user and how it would benefit them in their career."

ii. Calling an OpenAI Model

Subsequently, we'll employ the GPT-4 model to generate a personalized message tailored to the targeted customer.

from openai import OpenAI

client = OpenAI(api_key='Your OPENAI_API_KEY')  # Put your OpenAI API Key here

response = client.chat.completions.create(
    model="gpt-4-1106-preview",
    messages=[
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": f"Biodata: {linkedin_info}"}
    ]
)

# The generated message is the content of the first choice
print(response.choices[0].message.content)

Response:

Subject: Take Your NLP and AI Expertise to New Heights with GranttCourses

Hi Pradip,

I hope this message finds you well. I took the liberty to explore your impressive professional journey and your contributions to the realm of data science and AI. As a founder and lead data scientist of FutureSmart AI, as well as a noted freelancer with exemplary accomplishments, your dedication toward advancing in machine learning and NLP is clear and inspiring.

While you are evidently at the forefront of AI technology, especially in the NLP niche, continuous learning is what keeps such experts as yourself at the top of the game. At GranttCourses, we specialize in providing advanced educational courses tailored for thought leaders and innovators in the field of AI.

The reason for my reach out is to introduce you to our latest course offering: "Advanced NLP with Deep Learning." This course is not your run-of-the-mill educational material; it is specifically designed to enhance already strong foundations, like yours, with the latest breakthroughs and in-depth understanding of advanced neural network architectures, sequence modeling, and state-of-the-art NLP applications.

Here’s what you can expect from this course:

- Deep dive into transformer architectures and how they are revolutionizing the NLP space.

- Applying BERT and GPT models towards creating more nuanced text generation and comprehension systems, moving beyond the already sophisticated systems you've expertly fine-tuned.

- Hands-on projects that mirror real-world challenges, allowing you to directly apply your skills to solve complex problems efficiently.

Additionally, we offer live sessions with industry experts, including individuals who have contributed to seminal AI research. This will not only equip you with cutting-edge knowledge but also the opportunity to network with fellow AI pioneers and discuss potential collaborations or innovations.

Bearing in mind your extensive experience with PyTorch, Transformers, and similar libraries, this course might offer you the incremental updates and insights that could be instrumental in FutureSmart AI's endeavors, or in the personal brand you are creating through your educational platform, AI Demos.

As data science and machine learning continue to evolve, staying current with the latest techniques and theories isn’t just valuable, it's essential for maintaining the competitive edge that has marked your career so brilliantly. And with your mission to educate others, what you gain from this course could transcend your own knowledge, fostering the growth of your audience and client base who look to your expertise to understand the future of AI.

Let's discuss how GranttCourses can be a part of your continued success.

Best regards,

Ben Grantt

Sales Executive

GranttCourses

[Your Contact Information]
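Both use cases send the same two messages to the model and differ only in the system prompt, so the call can be factored into one reusable function. This is a minimal sketch under that assumption. The function name `generate_message` is illustrative, and `client` is expected to be an `OpenAI` client object like the one created in the snippets above.

```python
def generate_message(client, system_prompt, linkedin_info, model="gpt-4-1106-preview"):
    """Send the system prompt and biodata to a chat model and return the reply text."""
    response = client.chat.completions.create(
        model=model,
        messages=[
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": f"Biodata: {linkedin_info}"},
        ],
    )
    # The generated text is the content of the first choice
    return response.choices[0].message.content


# Usage (requires the openai package and a valid key):
# from openai import OpenAI
# client = OpenAI(api_key='Your OPENAI_API_KEY')
# print(generate_message(client, system_prompt, linkedin_info))
```

Switching between the hiring and the sales scenario then only requires passing a different `system_prompt`.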

And that's it! With a scraping API and an LLM, one pipeline can support both recruiting and sales outreach: fetch a profile, format it, and let the model draft a message tailored to that person.

5. Conclusion:

With scraping and NLP, the power to shape your professional future is right at your fingertips. So, dive in, explore, and unleash the hidden gems within your LinkedIn data. Remember, the sky's not the limit – it's just the launchpad for your success!

If your company is looking to embark on a similar journey of transformation and you're in need of a tailored NLP solution, we're here to guide you every step of the way. Our team at FutureSmart AI specializes in crafting custom NLP applications, including generative NLP, RAG, and ChatGPT integrations, tailored to your specific needs.

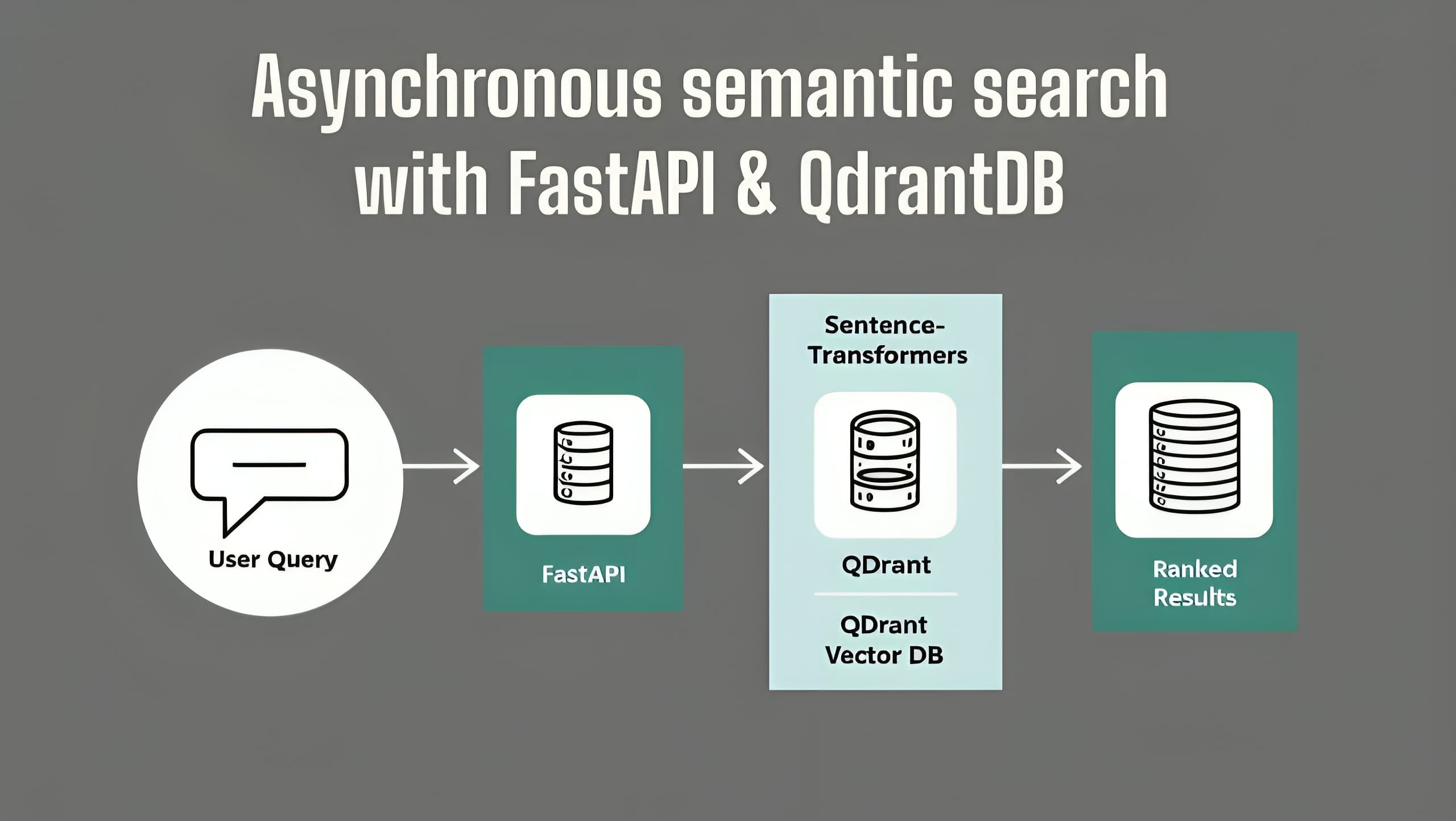

You can also refer to these resources for creating a complete backend application using ChatGPT and deploying it on AWS: Beginner's Guide to FastAPI & OpenAI ChatGPT API Integration | Code and Deploy FastAPI & Open AI ChatGPT on AWS EC2: A Comprehensive Step-by-Step Guide 🚀

Ditch the manual scraping and unlock automation, efficiency, and deeper connections with your target audience. Dive into the world of GenAI-powered LinkedIn data analysis and discover how it can drive personalized outreach, optimize marketing campaigns, and propel your business forward. Reach out to us at contact@futuresmart.ai and let's discuss how we can build a smarter, more responsive system that aids in targeted marketing or finding the best talent for your company. Join the ranks of forward-thinking companies leveraging the best of AI and see the difference for yourself!

Stay Connected with FutureSmart AI for the Latest in AI Insights

Eager to stay informed about the cutting-edge advancements and captivating insights in the field of AI? Explore AI Demos, your ultimate destination for staying abreast of the newest AI tools and applications. AI Demos serves as your premier resource for education and inspiration. Immerse yourself in the future of AI today by visiting aidemos.com.