NLP Roadmap 2023: Step-by-Step Guide

🚀 I'm a Top Rated Plus NLP freelancer on Upwork with over $300K in earnings and a 100% Job Success rate. This journey began in 2022 after years of enriching experience in the field of Data Science. 📚 Starting my career in 2013 as a Software Developer focusing on backend and API development, I soon pursued my interest in Data Science by earning my M.Tech in IT from IIIT Bangalore, specializing in Data Science (2016 - 2018). 💼 Upon graduation, I carved out a path in the industry as a Data Scientist at MiQ (2018 - 2020) and later ascended to the role of Lead Data Scientist at Oracle (2020 - 2022). 🌐 Inspired by my freelancing success, I founded FutureSmart AI in September 2022. We provide custom AI solutions for clients using the latest models and techniques in NLP. 🎥 In addition, I run AI Demos, a platform aimed at educating people about the latest AI tools through engaging video demonstrations. 🧰 My technical toolbox encompasses: 🔧 Languages: Python, JavaScript, SQL. 🧪 ML Libraries: PyTorch, Transformers, LangChain. 🔍 Specialties: Semantic Search, Sentence Transformers, Vector Databases. 🖥️ Web Frameworks: FastAPI, Streamlit, Anvil. ☁️ Other: AWS, AWS RDS, MySQL. 🚀 In the fast-evolving landscape of AI, FutureSmart AI and I stand at the forefront, delivering cutting-edge, custom NLP solutions to clients across various industries.

Are you a beginner data scientist or someone who wants to pursue a career in Natural Language Processing (NLP)? If so, then this blog post is for you. In this post, we will discuss how you can approach NLP, the step-by-step process you should follow, the resources you can refer to, and the kind of problems you can solve with NLP knowledge.

Prerequisite to NLP

Before diving into NLP, it is important to have a basic understanding of machine learning. You should know what machine learning is, how supervised and unsupervised learning differ, and the main task types such as classification, regression, and clustering.

If you are new to machine learning, the well-known Andrew Ng course is a good place to start.

Assuming you are already familiar with machine learning, let's now proceed to discuss NLP in detail.

Step 1: Text Pre-Processing

Text pre-processing is the first step in working with NLP. It involves cleaning and transforming raw text data into a format that can be easily analyzed by machine learning algorithms. Some common tasks in text pre-processing include tokenization, lemmatization, and removing punctuation.

To perform text pre-processing, you can use libraries like spaCy or NLTK. These libraries provide functions to tokenize text, perform lemmatization, and remove punctuation.

Here is a good tutorial for learning spaCy.
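To make the idea concrete, here is a minimal sketch of lowercasing, punctuation removal, and tokenization using only the Python standard library. The function name `preprocess` is my own choice for illustration. Lemmatization is left out because it needs a library such as spaCy or NLTK.

```python
import string


def preprocess(text: str) -> list[str]:
    """Lowercase the text, strip ASCII punctuation, and split it into tokens."""
    lowered = text.lower()
    # Map every ASCII punctuation character to a space so words stay separated.
    table = str.maketrans(string.punctuation, " " * len(string.punctuation))
    no_punct = lowered.translate(table)
    # Tokenize on runs of whitespace.
    return no_punct.split()


print(preprocess("Hello, World! NLP is fun."))
# ['hello', 'world', 'nlp', 'is', 'fun']
```

A real pipeline would usually rely on a library tokenizer, which handles contractions, URLs, and non-ASCII punctuation far better than this sketch.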

Step 2: Text Representation

After pre-processing the text, the next step is to convert it into a format that machine learning models can understand. This involves representing the text as numerical vectors.

There are several techniques for text representation, including the Bag of Words model (implemented as count vectorization) and TF-IDF. In addition, more advanced techniques like Word2Vec and Doc2Vec learn dense embeddings for words and documents, respectively.

It is important to have a good understanding of these text representation techniques as they are fundamental to working with NLP models.
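As a rough illustration, the sketch below computes Bag of Words counts and TF-IDF weights by hand for a toy corpus. It assumes the plain textbook formulas (term frequency = count / document length, IDF = log(N / document frequency)). Libraries often use smoothed variants, so their numbers will differ. The corpus and function names are made up for the example.

```python
import math
from collections import Counter

# Toy corpus of already-tokenized documents (hypothetical data).
docs = [
    ["nlp", "is", "fun"],
    ["nlp", "is", "hard"],
    ["deep", "learning", "is", "fun"],
]

# Vocabulary: every unique token, in a fixed (sorted) order.
vocab = sorted({tok for doc in docs for tok in doc})


def bag_of_words(doc):
    """Return raw token counts aligned with the vocabulary order."""
    counts = Counter(doc)
    return [counts[term] for term in vocab]


def idf(term):
    """Inverse document frequency: log(N / number of docs containing term)."""
    doc_freq = sum(1 for doc in docs if term in doc)
    return math.log(len(docs) / doc_freq)


def tf_idf(doc):
    """Return TF-IDF weights aligned with the vocabulary order."""
    counts = Counter(doc)
    return [(counts[term] / len(doc)) * idf(term) for term in vocab]


print(vocab)                  # ['deep', 'fun', 'hard', 'is', 'learning', 'nlp']
print(bag_of_words(docs[0]))  # [0, 1, 0, 1, 0, 1]
print(tf_idf(docs[0]))
```

Note how "is" gets a TF-IDF weight of zero because it appears in every document, which is exactly the down-weighting of uninformative words that TF-IDF is meant to provide.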

Resources:

Build Your First NLP Model: Text Feature Extraction: Bag of words and TF-IDF

Build Text Classification Model using Word2Vec | Gensim

Step 3: Information Extraction

Information extraction involves pulling structured information out of text, such as named entities (e.g., names of persons, organizations, places) and part-of-speech tags.

To perform information extraction, you can use libraries like spaCy that provide pre-trained models for entity recognition and part-of-speech tagging. However, if you have a specific domain or custom entities to extract, you may need to train your own custom named entity recognition model.
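Before training a custom model, a rule-based baseline is often useful for custom entities with a regular shape. The sketch below uses regular expressions only. It is not a trained NER model, and the entity labels and patterns (`EMAIL`, `ORDER_ID`) are hypothetical examples.

```python
import re

# Hypothetical patterns for two custom entity types (assumptions for illustration).
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[A-Za-z]{2,}"),
    "ORDER_ID": re.compile(r"\bORD-\d{4,}\b"),
}


def extract_entities(text):
    """Return (entity_text, label) pairs in the order they appear in the text."""
    found = []
    for label, pattern in PATTERNS.items():
        for match in pattern.finditer(text):
            found.append((match.start(), match.group(), label))
    found.sort()  # sort by character offset
    return [(entity, label) for _, entity, label in found]


text = "Please email jane@example.com about order ORD-12345 today."
print(extract_entities(text))
# [('jane@example.com', 'EMAIL'), ('ORD-12345', 'ORDER_ID')]
```

Rules like these break down for entities without a fixed pattern (person or organization names, for instance), which is where a pre-trained or custom-trained NER model becomes necessary.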

Resources:

Learn How to Build a Custom Named Entity Recognition (NER) model using spacy

Step 4: Deep Learning for NLP

To take your NLP skills to the next level, it is recommended to learn about deep learning algorithms and how they can be applied to NLP tasks. This involves understanding neural networks, backpropagation, and transfer learning.

There are several popular courses available online, such as the courses by Andrew Ng on Coursera or deeplearning.ai, that cover deep learning for NLP. These courses will provide a good foundation in neural networks and help you understand transfer learning techniques.
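To get a feel for what training a neural network means, here is a minimal sketch of a single sigmoid neuron learning the logical OR function with gradient descent. It is the smallest possible case of the forward pass and backward pass that backpropagation generalizes to deeper networks. The hyperparameters (`epochs`, `lr`, `seed`) are arbitrary choices for illustration.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


# Truth table for logical OR: ((x1, x2), target).
DATA = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0), ((1.0, 0.0), 1.0), ((1.0, 1.0), 1.0)]


def train(epochs=2000, lr=0.5, seed=0):
    """Train one sigmoid neuron with per-sample gradient descent."""
    rng = random.Random(seed)
    w = [rng.uniform(-0.5, 0.5), rng.uniform(-0.5, 0.5)]
    b = 0.0
    for _ in range(epochs):
        for (x1, x2), y in DATA:
            # Forward pass: weighted sum followed by the sigmoid activation.
            p = sigmoid(w[0] * x1 + w[1] * x2 + b)
            # Backward pass: with binary cross-entropy loss and a sigmoid,
            # the chain rule gives dLoss/dz = p - y.
            grad_z = p - y
            w[0] -= lr * grad_z * x1
            w[1] -= lr * grad_z * x2
            b -= lr * grad_z
    return w, b


def predict(w, b, x):
    """Return 1 if the neuron's output is at least 0.5, else 0."""
    return 1 if sigmoid(w[0] * x[0] + w[1] * x[1] + b) >= 0.5 else 0


w, b = train()
print([predict(w, b, x) for x, _ in DATA])
# [0, 1, 1, 1]
```

Deep learning frameworks such as PyTorch compute these gradients automatically, so in practice you rarely write the backward pass by hand.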

Resources:

The Deep Learning Specialization

Step 5: Transformers and Transfer Learning

Why Transformers?

Transformers are the backbone of many NLP models and have revolutionized the field with their exceptional ability to understand text data. Libraries such as Hugging Face Transformers offer a variety of these models, including BERT and T5, ready for fine-tuning and deployment.

The Power of Transfer Learning

Transfer learning allows you to take these pre-trained transformer models and fine-tune them for specific tasks. Whether you’re dealing with custom text classification, sentiment analysis, or Named Entity Recognition, a fine-tuned transformer model can be an invaluable asset.

Learning Resources

To dive deeper into these topics, the blog post "The Illustrated Transformer" provides an excellent visual guide to understanding how transformers work.
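The core operation that guide walks through is scaled dot-product attention. Below is a minimal pure-Python sketch of it for tiny hand-written vectors, assuming a single attention head with no masking and no learned projection matrices. Real transformer layers add those on top.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys
        ]
        weights = softmax(scores)
        # Output is the attention-weighted average of the value vectors.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
    return outputs


# Both keys are identical, so the query attends to both values equally.
print(attention([[1.0, 1.0]], [[1.0, 0.0], [1.0, 0.0]], [[0.0, 2.0], [4.0, 6.0]]))
# [[2.0, 4.0]]
```

When the keys differ, the softmax shifts weight toward the values whose keys best match the query, which is how each token decides which other tokens to focus on.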

Action Items

Learn How to Use Pre-Trained Models: Familiarize yourself with how to deploy pre-trained models for standard tasks. These are available in various libraries and can serve as robust starting points.

Learn How to Fine-Tune Transformer Models: Once you understand the basics, the next step is to learn how to fine-tune these models on custom datasets. This is especially crucial for tasks that require a specialized understanding of the data.

By mastering Transformers and Transfer Learning, you arm yourself with powerful tools that can significantly expedite your NLP projects.

Resources:

Learn How to use Hugging Face Transformers Library

Fine Tune Transformers Model on Custom Dataset

Step 6: Deploying NLP Models

Once you have trained and fine-tuned your NLP models, the next step is to deploy them so that they can be accessed by others. This can be done by creating an API for the model and hosting it on a cloud platform like AWS, Google Cloud, or Azure.

There are several frameworks and tools available for deploying NLP models, such as Flask or FastAPI. These frameworks allow you to create APIs and handle HTTP requests and responses.
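To show what such an API does under the hood, here is a framework-free sketch using Python's built-in `wsgiref` module instead of Flask or FastAPI. The `predict` function is a hypothetical stand-in for a real trained model, and the `/predict` route, port, and JSON shape are assumptions made for the example.

```python
import json
from wsgiref.simple_server import make_server


def predict(text: str) -> dict:
    """Hypothetical stand-in for a trained model: a trivial keyword rule."""
    label = "positive" if "good" in text.lower() else "negative"
    return {"label": label}


def app(environ, start_response):
    """WSGI app exposing POST /predict with a JSON body like {"text": "..."}."""
    is_predict = (
        environ.get("REQUEST_METHOD") == "POST"
        and environ.get("PATH_INFO") == "/predict"
    )
    if not is_predict:
        body = json.dumps({"error": "not found"}).encode("utf-8")
        start_response("404 Not Found", [("Content-Type", "application/json")])
        return [body]

    # Read and parse the JSON request body.
    length = int(environ.get("CONTENT_LENGTH") or 0)
    payload = json.loads(environ["wsgi.input"].read(length) or b"{}")

    # Run the model and return its prediction as JSON.
    body = json.dumps(predict(payload.get("text", ""))).encode("utf-8")
    headers = [("Content-Type", "application/json"), ("Content-Length", str(len(body)))]
    start_response("200 OK", headers)
    return [body]


def run(host="127.0.0.1", port=8000):
    """Serve the app locally; call run() to start (blocks until interrupted)."""
    with make_server(host, port, app) as server:
        server.serve_forever()
```

Flask and FastAPI take care of the routing, request parsing, and validation that this sketch does by hand, which is why they are the usual choice for real deployments.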

Resources:

Deploy GPT Streamlit App on AWS EC2

Deploy FastAPI & Open AI ChatGPT on AWS EC2

Deploy Fine Tuned BERT or Transformers model on Streamlit Cloud

Creating a GPT Product Description Generator with AWS Lambda and API Gateway

Step 7: Embedding and Semantic Search

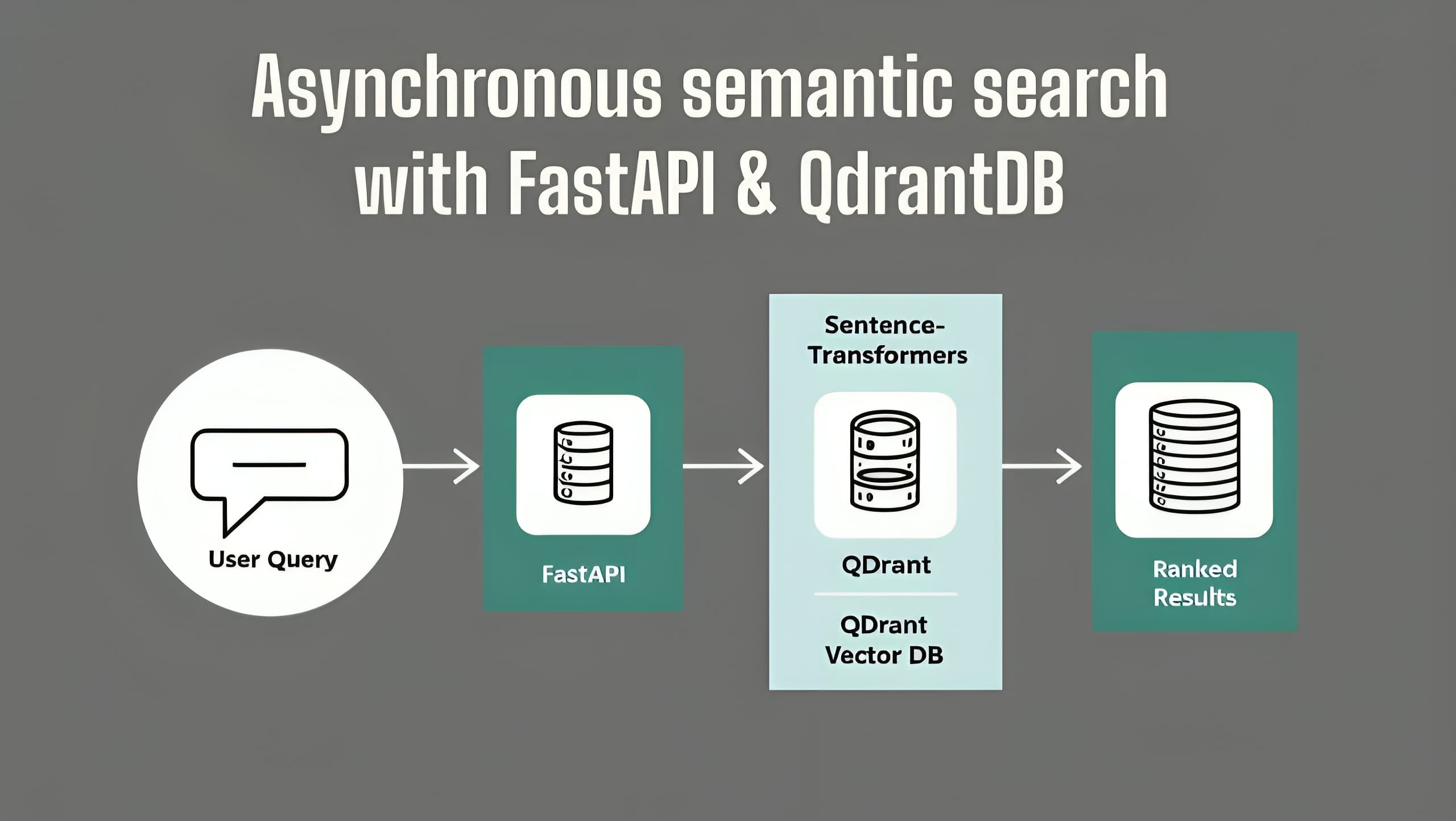

Embedding refers to converting text into vector representations that can be used for semantic search and comparison. By representing text as vectors, you can compare and measure the similarity between different documents or queries.

There are open-source libraries like Sentence Transformers that provide pre-trained models for text embedding. These models can be used to create embeddings and perform semantic search.
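The search step itself is simple once you have embeddings. The sketch below ranks documents by cosine similarity to a query vector. The three-dimensional vectors are hand-made, hypothetical values standing in for real model output, which typically has hundreds of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical embeddings: in practice these come from an embedding model.
CORPUS = {
    "How do I reset my password?": [0.9, 0.1, 0.0],
    "Best pizza places nearby": [0.0, 0.2, 0.9],
    "I forgot my login credentials": [0.8, 0.3, 0.1],
}


def search(query_vec, top_k=2):
    """Return the top_k corpus texts most similar to the query vector."""
    scored = [(cosine_similarity(query_vec, vec), text) for text, vec in CORPUS.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [text for _, text in scored[:top_k]]


# Pretend this vector is the embedding of "can't sign in to my account".
print(search([1.0, 0.2, 0.0]))
# ['How do I reset my password?', 'I forgot my login credentials']
```

The two account-related sentences rank highest even though they share almost no words with each other, which is the point of semantic search: similarity is measured in embedding space rather than by keyword overlap.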

Resources:

Sentence Transformers: Sentence Embedding, Sentence Similarity, Semantic Search and Clustering

Build high-performance Semantic Search applications using Vector Databases

Step 8: Large Language Models (LLMs)

Large language models like GPT-3 and GPT-4 have gained popularity in the field of NLP. These models are capable of generating human-like text and can be fine-tuned for various NLP tasks.

To work with LLMs, you can call hosted models such as OpenAI's GPT models through their API, or run open models such as Meta's Llama 2. Both come with interfaces and utilities for working with LLMs and integrating them into your NLP applications.

Resources:

Open AI ChatGPT, GPT-4, GPT-3 Playlist

ChatGPT Prompt Engineering for Developers

Step 9: Vector Databases

Vector databases store text embeddings and enable efficient searching and retrieval of similar vectors, making them a valuable tool in NLP applications. Combined with large language models, they allow for more advanced NLP functionality, such as question-answering systems and conversational AI that retrieve relevant documents and pass them to the model as context. Overall, vector databases play a crucial role in the performance and efficiency of these applications.

Resources:

Semantic Search with Open-Source Vector DB: Chroma DB

Step 10: LLM Libraries

As Large Language Models (LLMs) like the GPT variants continue to dominate the NLP space, the need for specialized libraries that streamline building on these models keeps growing. Two such libraries that have gained considerable traction are LangChain and LlamaIndex. Here's what you need to know about these popular tools and how they can supercharge your NLP projects.

LangChain: Your One-Stop Shop for LLM Applications

LangChain offers a comprehensive suite of utilities designed to simplify the building of applications around Large Language Models. Whether you're looking to chunk PDF files, interface with vector databases, or execute more complex tasks like Natural Language to SQL conversions, LangChain has got you covered.

Features:

PDF Chunking: Efficiently divide large PDF files into manageable pieces.

Interface with LLMs: Seamless integration with popular Large Language Models like those from OpenAI.

LangChain SQL Agent: A specialized component for Natural Language to SQL conversions.
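To illustrate the chunking idea without depending on LangChain itself, here is a minimal sketch of fixed-size chunking with overlap. It assumes the text has already been extracted from the PDF and splits on characters. The function name and default sizes are my own choices, not LangChain's API.

```python
def chunk_text(text, chunk_size=200, overlap=50):
    """Split text into chunks of chunk_size characters that overlap by `overlap`."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in the range [0, chunk_size)")

    step = chunk_size - overlap  # how far the window moves each time
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        # Stop once the current window has reached the end of the text.
        if start + chunk_size >= len(text):
            break
    return chunks


print(chunk_text("abcdefghij", chunk_size=4, overlap=2))
# ['abcd', 'cdef', 'efgh', 'ghij']
```

The overlap keeps sentences that straddle a boundary visible in both neighboring chunks, which helps retrieval quality when the chunks are later embedded and searched.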

LlamaIndex: A Flexible Library for Data Augmentation and Indexing

LlamaIndex serves a somewhat overlapping but distinct role compared to LangChain. Its core strength lies in connecting to various data sources and indexing documents to augment the capabilities of Large Language Models.

Features:

Multiple Connectors: Easily connect to data sources like Google Docs, Notion, and PDF files.

Advanced Indexing: Offers multiple methods to index documents, from simple list indexes to more complex tree structures and table keyword indexes.

Use-Cases:

LlamaIndex is ideal for projects that require advanced semantic search capabilities, thanks to its robust indexing features.

Resources:

I've created several videos diving into the capabilities of LangChain and LlamaIndex. Whether you're interested in building a Natural Language to SQL interface or experimenting with advanced document indexing, these resources can guide you through the process.

Building a Document-based Question Answering System with LangChain, Pinecone, and LLMs like GPT-4.

Mastering LlamaIndex : Create, Save & Load Indexes, Customize LLMs, Prompts & Embeddings

LangChain, SQL Agents & OpenAI LLMs: Query Database Using Natural Language

Step 11: MLOps for NLP

MLOps, or Machine Learning Operations, involves deploying, monitoring, and managing machine learning models in production. In the context of NLP, MLOps includes monitoring the performance of NLP models, gathering user feedback, and continuously improving the models.

There are various MLOps tools available, such as Kubeflow, MLflow, and Weights & Biases, that can help you with model deployment, monitoring, and feedback collection.

Resources:

Machine Learning Engineering for Production (MLOps) Specialization

Evaluating and Debugging Generative AI Models Using Weights and Biases

Step 12: Relational Databases and SQL

Understanding relational databases and SQL is important for handling data and storing predictions from NLP models. As part of an end-to-end NLP application, you may need to interact with a database to store and retrieve data for your NLP tasks.

Commonly used relational databases include MySQL and PostgreSQL. Having a good understanding of databases and SQL will enable you to handle data efficiently and effectively.
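As a small illustration of storing and querying predictions, the sketch below uses Python's built-in `sqlite3` module in place of MySQL or PostgreSQL so that it runs without a database server. The SQL itself carries over with minor dialect changes. The table and column names are hypothetical.

```python
import sqlite3


def init_db(conn):
    """Create the predictions table if it does not exist yet."""
    conn.execute(
        """
        CREATE TABLE IF NOT EXISTS predictions (
            id INTEGER PRIMARY KEY AUTOINCREMENT,
            input_text TEXT NOT NULL,
            label TEXT NOT NULL,
            score REAL NOT NULL
        )
        """
    )


def save_prediction(conn, text, label, score):
    """Insert one model prediction, using placeholders to avoid SQL injection."""
    conn.execute(
        "INSERT INTO predictions (input_text, label, score) VALUES (?, ?, ?)",
        (text, label, score),
    )
    conn.commit()


def label_counts(conn):
    """Return how many predictions were stored for each label."""
    rows = conn.execute(
        "SELECT label, COUNT(*) FROM predictions GROUP BY label ORDER BY label"
    ).fetchall()
    return dict(rows)


conn = sqlite3.connect(":memory:")  # in-memory database for the demo
init_db(conn)
save_prediction(conn, "Great product", "positive", 0.97)
save_prediction(conn, "Terrible support", "negative", 0.91)
save_prediction(conn, "Works well", "positive", 0.88)
print(label_counts(conn))
# {'negative': 1, 'positive': 2}
```

Keeping predictions in a table like this also makes it easy to join them with user feedback later, which feeds directly into the monitoring described in the MLOps step.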

Conclusion

In this blog post, we discussed a step-by-step roadmap for beginners approaching NLP. We covered text pre-processing, text representation, information extraction, deep learning for NLP, transformers and transfer learning, deploying NLP models, embedding and semantic search, large language models, vector databases, LLM libraries, MLOps for NLP, and working with relational databases.

Although this roadmap provides a high-level overview, it is important to explore each topic in detail and gain practical experience by working on NLP projects. There are numerous online resources, courses, and libraries available to help you learn and apply NLP techniques effectively.

If you want to dive deeper into any specific topic, refer to the video transcript provided in this blog post, as it contains links to specific videos and resources related to each topic.

Remember, NLP is a rapidly evolving field, and staying updated with the latest research and developments is crucial. Happy learning and exploring the exciting world of Natural Language Processing!