Semantic Search using LlamaIndex and Langchain

Introduction:

We all know it's the era of Large Language Models (LLMs) like GPT-4. As powerful as these models are, they become far more useful when paired with semantic search. In this blog post, we will explore why combining semantic search with GPT is often a better approach than simply fine-tuning GPT. We'll discuss the benefits of tools like LlamaIndex and Langchain and walk you through building your own custom solution. Through code examples, data collection, and the integration of custom embeddings with Pinecone, you'll learn how to build a Q&A bot over your own data. Let's get started!

If you want to learn more about semantic search, feel free to look into these videos from FutureSmart AI.

Revolutionizing Search: How to Combine Semantic Search with GPT-3 Q&A

GPT-3 Embeddings: Perform Text Similarity, Semantic Search, Classification, and Clustering

Why is Semantic Search + GPT better than finetuning GPT?

Semantic search is a technique that retrieves text by meaning rather than by exact keyword match. Text is converted into embeddings, numeric vectors that capture the context and meaning of words and sentences, and a query is answered by finding the passages whose embeddings are closest to the query's. Paired with GPT, a pre-trained language model that produces coherent, natural-sounding text, it becomes a powerful tool for natural language processing.

Unlike fine-tuning GPT, which means training the model on a particular task with annotated data, semantic search is generic and needs no task-specific training data. Any text can be indexed and searched, and because the search considers the meaning and context of the words, it can handle more nuanced queries than keyword matching.

By combining the retrieval strength of semantic search with the language understanding of GPT, this approach covers a wide range of natural language processing tasks, such as question answering, chatbots, and content recommendation. Compared with fine-tuning GPT alone, semantic search + GPT is more adaptable: updating what the system knows means re-indexing documents rather than retraining a model.
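The retrieve-then-ask pattern described above can be sketched in a few lines. This is only an illustration, not how LlamaIndex does it internally: simple word overlap stands in for embedding similarity, and the helper names (`tokenize`, `score`, `retrieve`, `build_prompt`) and the sample passages are made up for this sketch.

```python
import re


def tokenize(text):
    """Lowercase a string and return the set of words it contains."""
    return set(re.findall(r"[a-z']+", text.lower()))


def score(query, passage):
    """Toy relevance score: fraction of query words that appear in the passage.

    A real system would compare embedding vectors instead.
    """
    query_words = tokenize(query)
    if not query_words:
        return 0.0
    return len(query_words & tokenize(passage)) / len(query_words)


def retrieve(query, passages, top_k=2):
    """Return the top_k passages ranked by the toy score, best first."""
    ranked = sorted(passages, key=lambda p: score(query, p), reverse=True)
    return ranked[:top_k]


def build_prompt(query, passages, top_k=2):
    """Place the retrieved passages in front of the question for the LLM."""
    context = "\n".join(f"- {p}" for p in retrieve(query, passages, top_k))
    return (
        "Answer the question using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {query}\nAnswer:"
    )


# Hypothetical passages standing in for chunks of an indexed corpus.
passages = [
    "Alice follows the White Rabbit down a rabbit hole.",
    "The Queen of Hearts plays croquet with flamingos.",
    "The Cheshire Cat can disappear, leaving only its grin.",
]
prompt = build_prompt("Who follows the White Rabbit?", passages, top_k=1)
print(prompt)
```

The model itself is never retrained here. Only the context placed in the prompt changes, which is why new documents can be added without touching the model.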

Why use LlamaIndex and Langchain?

LlamaIndex (formerly known as GPT Index) and Langchain are two libraries that make it easier to search and retrieve relevant information with LLMs.

LlamaIndex uses a pre-trained language model, such as GPT, to index a large corpus of text. The index stores a representation of each document that captures its semantic meaning, which makes relevant information simpler and quicker to find. This is especially helpful when users need to locate specific information quickly in a large body of text, for example on e-commerce or content-heavy websites. Langchain supplies the wrappers used in this post for the LLM and for the embedding model.

How to build it?

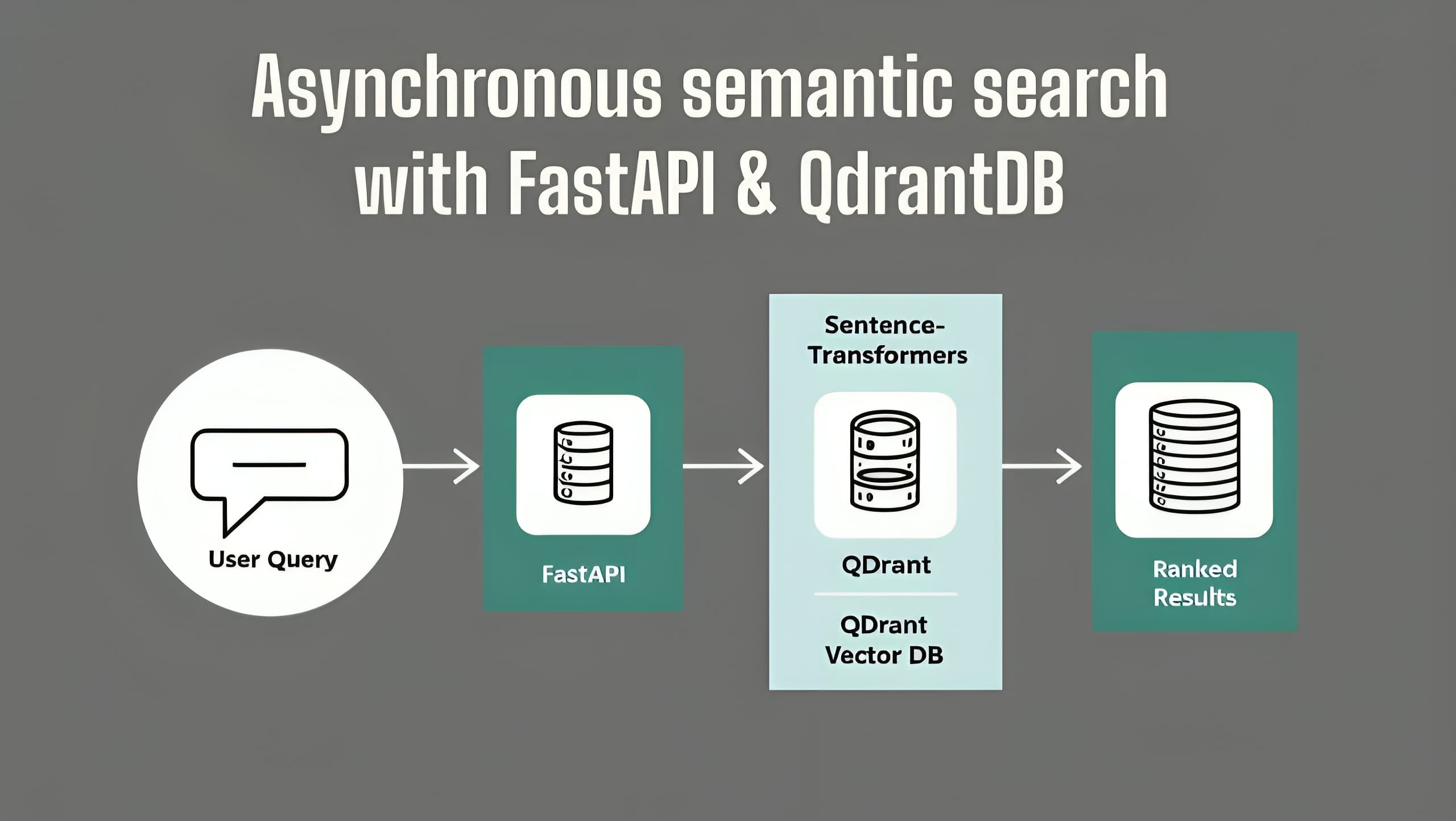

The idea is that we will build a database of the corpus we collect from multiple sources, which may include data from clients, crowdsourcing, or even web crawling.

Once the corpus is built and embedded, we want to store the embeddings somewhere so that they are easy to fetch and compare with the query we send in.

There are various tools for this; prominent ones include Qdrant and Pinecone.

- These are vector search engines: essentially APIs that store the embedded data in their cloud and return it whenever we query them.

We also have LlamaIndex, which can both talk to these APIs and store an index locally as a JSON file.
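To make the "store embeddings, then find similarities with the query" idea concrete, here is a minimal in-memory sketch. It is not the API of Qdrant, Pinecone, or LlamaIndex: `ToyVectorStore` is a hypothetical class, and the three-dimensional vectors are hand-made rather than produced by an embedding model.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class ToyVectorStore:
    """In-memory stand-in for a vector database (hypothetical, for illustration)."""

    def __init__(self):
        self._items = {}  # id -> (vector, text)

    def upsert(self, item_id, vector, text):
        """Insert a new item or overwrite an existing one with the same id."""
        self._items[item_id] = (list(vector), text)

    def query(self, vector, top_k=1):
        """Return (id, similarity, text) for the top_k most similar items."""
        scored = [
            (item_id, cosine_similarity(vector, stored), text)
            for item_id, (stored, text) in self._items.items()
        ]
        scored.sort(key=lambda row: row[1], reverse=True)
        return scored[:top_k]


store = ToyVectorStore()
# Hand-made 3-dimensional vectors; a real embedding model produces hundreds of dimensions.
store.upsert("rabbit", [0.9, 0.1, 0.0], "The White Rabbit checks his watch.")
store.upsert("queen", [0.0, 0.2, 0.9], "The Queen of Hearts shouts orders.")

best_id, best_score, best_text = store.query([1.0, 0.0, 0.0], top_k=1)[0]
print(best_id, round(best_score, 3), best_text)
```

A hosted vector database does the same job at scale, typically with approximate nearest-neighbour search instead of the exhaustive comparison used here.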

Let's code some stuff...

Collecting the data.

Let's download the book "Alice in Wonderland" and embed it.

!wget https://www.gutenberg.org/cache/epub/11/pg11.txt

Install the requirements

!pip install langchain

!pip install llama_index

!pip install openai

!pip install transformers

!pip install torch

!pip install sentence_transformers

!pip install pinecone-client

Let's get coding...

To create a chat application for a given use case, we need to set up a few components.

"prompt helper": This is a class which takes in parameters like:

- max_input_size: The maximum number of tokens in the input to the LLM.
- max_chunk_overlap: The maximum overlap, in tokens, between consecutive chunks.
- num_output: The number of tokens reserved for the LLM's output.
- chunk_size_limit: The maximum chunk size to use.
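To see how these parameters interact, the sketch below splits a text into overlapping chunks and computes how many tokens remain for the prompt. It borrows the parameter names listed above but is not LlamaIndex's actual splitting logic: whitespace-separated words stand in for real tokens, and `prompt_budget` and `split_with_overlap` are hypothetical helpers.

```python
def prompt_budget(max_input_size, num_output):
    """Tokens left for the prompt once room for the answer is reserved."""
    if num_output >= max_input_size:
        raise ValueError("num_output must be smaller than max_input_size")
    return max_input_size - num_output


def split_with_overlap(tokens, chunk_size_limit, max_chunk_overlap):
    """Split a token list into chunks that share max_chunk_overlap tokens."""
    if not 0 <= max_chunk_overlap < chunk_size_limit:
        raise ValueError("overlap must be non-negative and below the chunk size")
    step = chunk_size_limit - max_chunk_overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size_limit])
        if start + chunk_size_limit >= len(tokens):
            break  # the last chunk already reaches the end of the text
    return chunks


# Whitespace-separated words stand in for real tokenizer output.
words = "Alice was beginning to get very tired of sitting by her sister".split()
chunks = split_with_overlap(words, chunk_size_limit=5, max_chunk_overlap=2)
for chunk in chunks:
    print(" ".join(chunk))
print(prompt_budget(max_input_size=4096, num_output=256))
```

The overlap means a sentence that straddles a chunk boundary still appears whole in at least one chunk, at the cost of embedding some text twice.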

"LLM Predictor": This class creates an encapsulation of the model to be used in LLM.

The only input it needs is the LLM itself.

Typically, this is an OpenAI model.

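from langchain import OpenAI
from llama_index import LLMPredictor

# Maximum number of tokens the model may generate (example value, adjust as needed)
num_outputs = 256

llm_predictor = LLMPredictor(
    llm=OpenAI(
        temperature=0,
        model_name='text-ada-001',
        max_tokens=num_outputs
    ),
)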

- Documents: This class encapsulates the data/text, which can come in various formats such as a single text file or a directory of text files.

from llama_index import SimpleDirectoryReader

# Folder that contains the text files (example value)
directory_path = 'data'
documents = SimpleDirectoryReader(directory_path).load_data()

- Vector Indexing: Once the documents are created, we need to index them so that they can be used for semantic search.

from llama_index import GPTSimpleVectorIndex

# Start with an empty index and insert the documents one by one
index = GPTSimpleVectorIndex([])
for doc in documents:
    index.insert(doc)

These are the basic things we need to have to essentially build a chatbot.

Now, we'll take a look at a few examples. One basic example and one with Pinecone integration to store the data on the cloud.

Examples

In this section, we will look at two examples:

- Q&A bot using the functions straight from the documentation
- Incorporating custom embeddings and uploading the data to Pinecone

Q&A Bot straight out of the docs

Download the Data

!wget https://www.gutenberg.org/cache/epub/11/pg11.txt

Move the downloaded file into a data directory to make later processing easier. The directory listing further down assumes it was renamed to data.txt.

Imports

from llama_index import GPTSimpleVectorIndex, SimpleDirectoryReader

import openai

Configure OpenAI

Go to openai.com and get your API key, then run this piece of code before the next cell.

import os

os.environ['OPENAI_API_KEY'] = '<OPENAI-API-KEY>'

As of now, the directory structure should be somewhat similar to this:

- directory
  - data
    - data.txt
  - notebook.ipynb

Documents

Now, we can build the documents like this:

documents = SimpleDirectoryReader('data').load_data()
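As a rough picture of what loading a directory involves, the sketch below reads every .txt file in a folder into memory using only the standard library. It is not how SimpleDirectoryReader is implemented (the real reader wraps the text in document objects), and `load_text_files` is a hypothetical helper.

```python
import tempfile
from pathlib import Path


def load_text_files(directory):
    """Read every .txt file in a directory into a list of (name, text) pairs."""
    docs = []
    for path in sorted(Path(directory).glob("*.txt")):
        docs.append((path.name, path.read_text(encoding="utf-8")))
    return docs


# Demonstrate on a throwaway directory so the sketch runs anywhere.
with tempfile.TemporaryDirectory() as tmp:
    Path(tmp, "data.txt").write_text("Down the Rabbit-Hole", encoding="utf-8")
    Path(tmp, "notes.md").write_text("ignored", encoding="utf-8")
    loaded = load_text_files(tmp)

print(loaded)
```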

Indexing

Once the documents are ready, we build an index over them so that a query can be matched against the most similar parts of the text. This can be done like this:

index = GPTSimpleVectorIndex(documents)

Querying from the Index

response = index.query("Who is Alice?")

print(response)

This produces output like this:

Alice is a young girl who is curious and imaginative. She is exploring a rabbit hole and finds herself in a strange world filled with strange creatures and events. She is trying to find her way out of the rabbit hole and back home.

You can ask any other question the same way.

response = index.query("What is the story about?")

print(response)

Alice's Adventures in Wonderland is a story about a young girl named Alice who falls down a rabbit hole and finds herself in a strange and magical world. She meets a variety of strange creatures, including the White Rabbit, the Cheshire Cat, the Mad Hatter, the Queen of Hearts, and the Mock Turtle. Through her adventures, Alice learns valuable lessons about life and growing up. The story is available in a variety of formats, including "Plain Vanilla ASCII" and other formats, and can be accessed and distributed in a variety of ways, including in binary, compressed, marked up, nonproprietary or proprietary forms.

Something funny here: the end of the answer makes no sense. The reason is that the text file contains a lot of Project Gutenberg licence text about using and distributing the file, and that text was indexed along with the story.
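One way to avoid this is to cut the licence text away before indexing. The sketch below assumes the file marks the story with lines beginning `*** START OF` and `*** END OF`. Check your copy of the file, since the exact wording of these markers may differ. `strip_gutenberg_boilerplate` is a hypothetical helper, and the sample text is shortened for illustration.

```python
def strip_gutenberg_boilerplate(text):
    """Keep only the lines between the '*** START OF' and '*** END OF' markers.

    If either marker is missing, the text is returned unchanged.
    """
    lines = text.splitlines()
    start = end = None
    for i, line in enumerate(lines):
        if line.startswith("*** START OF") and start is None:
            start = i
        elif line.startswith("*** END OF"):
            end = i
            break
    if start is None or end is None or end <= start:
        return text
    return "\n".join(lines[start + 1:end]).strip()


# Shortened stand-in for the downloaded file (assumed marker format).
sample = """The Project Gutenberg eBook of Alice's Adventures in Wonderland
*** START OF THE PROJECT GUTENBERG EBOOK ALICE'S ADVENTURES IN WONDERLAND ***
CHAPTER I.
Down the Rabbit-Hole
*** END OF THE PROJECT GUTENBERG EBOOK ALICE'S ADVENTURES IN WONDERLAND ***
Updated editions will replace the previous one.
"""
print(strip_gutenberg_boilerplate(sample))
```

Writing the cleaned text back to the data directory before building the index should keep the licence wording out of the answers.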

Saving the data

# save to disk

index.save_to_disk('index.json')

Loading the data

# load from disk

index = GPTSimpleVectorIndex.load_from_disk('index.json')
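Saving matters because computing embeddings costs API calls and time, while reloading a file is nearly free. The sketch below shows the general idea with a hand-rolled JSON layout. The layout and the helpers `save_index` and `load_index` are assumptions made for illustration; the file LlamaIndex writes has its own structure.

```python
import json
import os
import tempfile


def save_index(index, path):
    """Write a {doc_id: {"text": ..., "embedding": [...]}} mapping to a JSON file."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(index, f)


def load_index(path):
    """Read the mapping back from disk."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)


# Hypothetical one-document index with a tiny hand-made embedding.
toy_index = {
    "doc-0": {"text": "Down the Rabbit-Hole", "embedding": [0.1, 0.2, 0.3]},
}

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "index.json")
    save_index(toy_index, path)
    restored = load_index(path)

print(restored == toy_index)
```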

Using Custom Embeddings + Integrating with Pinecone

Imports

from langchain.embeddings.huggingface import HuggingFaceEmbeddings
from llama_index import GPTPineconeIndex, LangchainEmbedding, SimpleDirectoryReader
import pinecone

What is Pinecone?

Pinecone is a cloud-based vector database that offers a quick and easy way to store and search high-dimensional data, such as embeddings produced by machine learning models. It is designed to be scalable and efficient, which makes it suitable for real-time applications.

Pinecone lets users build indexes over high-dimensional vectors and search them with nearest-neighbour queries to bring up related items. The vectors can represent many kinds of data, including text, numbers, and images.

Pinecone is simple to connect to existing applications, since it offers API clients for several programming languages, such as Python, Java, and Go. It also works with embeddings produced by well-known machine learning frameworks like TensorFlow and PyTorch, so users can index and search the output of models built with them.

If you want to learn more about Vector Databases take a look at these videos:

Build high-performance Semantic Search applications using Vector Databases

Revolutionizing Search: How to Combine Semantic Search with GPT-3 Q&A

Pinecone and OpenAI Config

import os

os.environ['PINECONE_API_KEY'] = '<pinecone-api-key>'

os.environ['PINECONE_ENVIRONMENT'] = '<pinecone-environment>'

os.environ['OPENAI_API_KEY'] = '<openai-api-key>'

Instantiate Pinecone Index

pinecone.init(
    api_key=os.environ['PINECONE_API_KEY'],
    environment=os.environ['PINECONE_ENVIRONMENT']
)

pinecone.create_index(
    "quickstart",
    dimension=768,
    metric="euclidean",
    pod_type="p1"
)

Custom Embeddings

To get custom embeddings we use Sentence Transformers. If you want to learn more about sentence transformers take a look at this video.

embed_model = LangchainEmbedding(HuggingFaceEmbeddings(model_name="clips/mfaq"))

len(embed_model.get_text_embedding('What is Embeddings?')) # Output: 768
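The length check above matters because the embedding size has to equal the dimension the Pinecone index was created with, and the index ranks results by the metric chosen at creation time. The sketch below shows both ideas with placeholder vectors and the standard library only. `check_dimension` and `euclidean_distance` are hypothetical helpers, not part of the Pinecone client.

```python
import math

INDEX_DIMENSION = 768  # matches the dimension passed to create_index above


def check_dimension(vector, expected=INDEX_DIMENSION):
    """Raise early if an embedding does not match the index dimension."""
    if len(vector) != expected:
        raise ValueError(f"expected {expected} values, got {len(vector)}")
    return vector


def euclidean_distance(a, b):
    """Straight-line distance between two equal-length vectors (smaller is closer)."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


# Placeholder vectors; in the article these would come from embed_model.
query = check_dimension([0.0] * INDEX_DIMENSION)
near = check_dimension([0.01] * INDEX_DIMENSION)
far = check_dimension([1.0] * INDEX_DIMENSION)

print(euclidean_distance(query, near) < euclidean_distance(query, far))
```

If you switch to an embedding model with a different output size, the index has to be created with the matching dimension.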

Access the Index

index = pinecone.Index("quickstart")

Create Documents

documents = SimpleDirectoryReader('./data').load_data()

Create indexes in the Pinecone Index

index = GPTPineconeIndex(
    documents,
    embed_model=embed_model,
    pinecone_index=index
)

Querying

response = index.query("What is life?")

print(response)

This is a very basic example of using LlamaIndex and uploading the index to Pinecone.
